pip install rlmflow

tldr

rlmflow turns Recursive Language Models into inspectable execution graphs. It’s a Python library for writing RLM agents where every query, action, observation, delegation, wait, resume, and result is a typed Pydantic state, and a run is a recursive Graph of agent trajectories.

The whole engine is one transition: step(graph) → graph. The trace and the execution are the same data structure (there is no separate “tracing mode” to enable), so the same run renders as a Rich live tree, a Mermaid diagram, a Gantt swimlane, or a Gradio step-through viewer, all from one-line projections of the graph.

That graph allows you to inspect each subagent, replay from a checkpoint, fork a workspace, and edit states before continuing. We’ll walk through those moves on a real coding-agent run shipped with the repo.

Introduction

Context rot is the failure mode every practitioner has hit: a Claude Code session that “gets dumber”, a Cursor chat that forgets the file you opened thirty messages ago, a research agent that can quote your prompt back but can’t use it. Anthropic defines it as recall degrading as the window grows: frontier models advertise 200k–1M tokens and degrade long before that. The tokens fit, the model just can’t reason over them all at once. Easy benchmarks miss this (RULER is constant-complexity and frontier models score 90%+), but Chroma, OOLONG, and lost-in-the-middle all show real degradation well below the nominal limit.

Existing fixes (bigger windows, retrieval, summarization, context-folding) each pick a decomposition strategy for the model, ahead of time. Even though they work in practice, they are also exactly the pattern Sutton’s Bitter Lesson warns about: hard-coded human structure that wins in the short run but loses in the long run to general methods that scale with compute. As capability improves, the fixed strategy becomes the ceiling.

Recursive Language Models flip that. An LLM sits in a Python REPL with the long context bound as a variable, and a single extra primitive (rlm_delegate) lets it spawn a fresh sub-agent with its own window. From there the model peeks, slices, greps, or recursively delegates only when it decides to. RAG retrieves; RLMs investigate. In the original RLM evaluation, the reported case is strong: RLM(GPT-5-mini) beats raw GPT-5 on a tough long-context benchmark at roughly the same API cost, and holds up at 10M+ token corpora no direct baseline can fit (post, paper, rlm-minimal, verifiers).

But as the number of sub-agents grows, the tree gets hard to observe and control: parents spawn children, children spawn more children, results return upward, and a flat transcript hides almost everything you’d want to ask of the run. That’s where rlmflow comes in, representing sprawling trees of recursive agents as inspectable, controllable graphs.

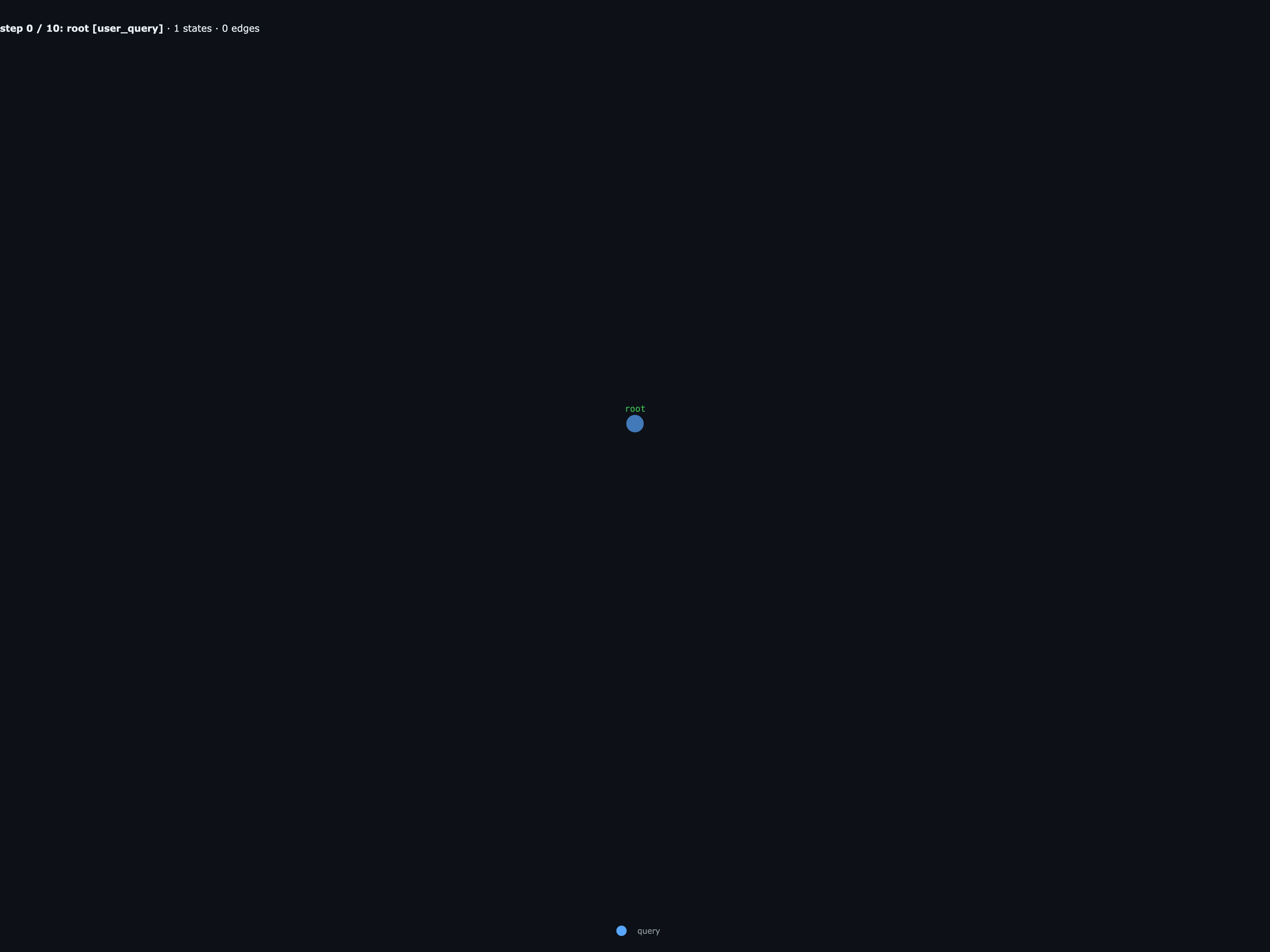

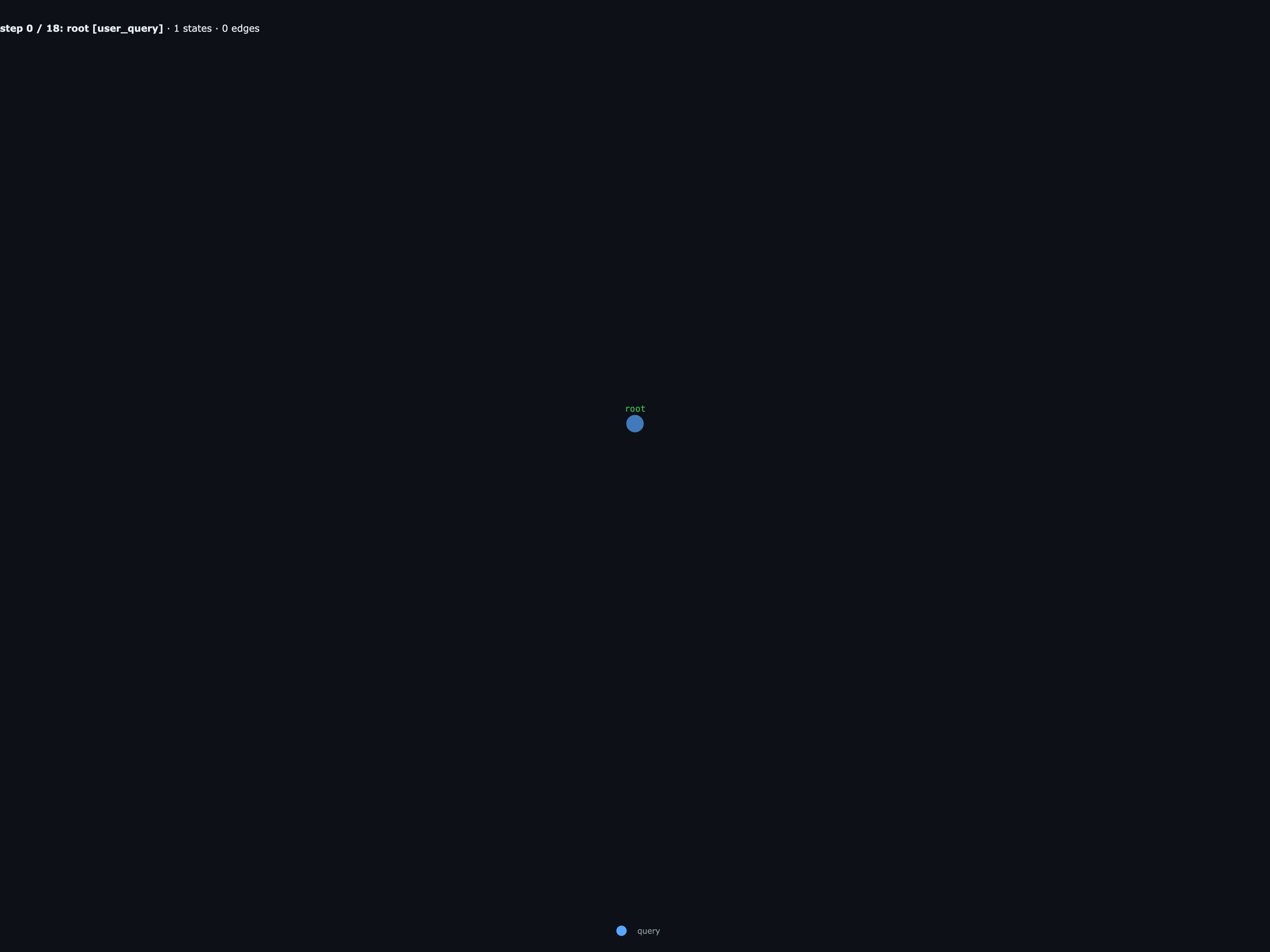

RLMs as graphs

To better understand what this means, start with the canonical RLM demo: needle-in-a-haystack. The context is a huge synthetic document, and the goal is simple: find the secret code hidden inside it.

The root agent looks at the document, decides not to load the whole thing into context, and splits the haystack across a few sub-agents:

- one child scans the first third for the needle phrase,

- another scans the middle third,

- a third child scans the final third, finds several near-matches, and spawns two smaller children to inspect the candidate windows,

- a verifier child checks the candidate code against the original question,

- the root agent returns the final code.
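The split-and-scan plan above can be sketched in plain Python. This is only an illustration of the decomposition, not rlmflow code: `split_with_overlap` and `scan_chunk` are hypothetical helpers, the haystack is synthetic, and each "child" is just a regex search over its own slice.

```python
import re


def split_with_overlap(text, parts, overlap):
    """Split text into `parts` roughly equal chunks, each widened by `overlap` chars.

    The overlap keeps a needle that straddles a boundary visible to at least one chunk.
    Returns (absolute_offset, chunk_text) pairs.
    """
    size = -(-len(text) // parts)  # ceiling division
    chunks = []
    for i in range(parts):
        start = max(0, i * size - overlap)
        end = min(len(text), (i + 1) * size + overlap)
        chunks.append((start, text[start:end]))
    return chunks


def scan_chunk(offset, chunk, pattern):
    """What one child might do: report (absolute position, code) for each match."""
    return [(offset + m.start(), m.group(1)) for m in re.finditer(pattern, chunk)]


# Synthetic haystack with one needle in the middle (illustrative data only).
haystack = "lorem ipsum " * 500 + "The secret code is 84721. " + "dolor sit amet " * 500
pattern = r"secret code is (\d+)"

found = set()  # a set deduplicates hits that land in two overlapping chunks
for offset, chunk in split_with_overlap(haystack, parts=3, overlap=64):
    found.update(scan_chunk(offset, chunk, pattern))

codes = {code for _, code in found}
print(codes)
```

In a real RLM the model decides the split itself; the point here is only that each child sees a slice, not the whole document.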

Even though this is a small example, it gets messy fast: the root has children, and one of those children has children of its own.

Each child is recursive: it has the same tools, a REPL interface, and the ability to spawn more child RLMs through an rlm_delegate(name, query, ctx) function. Context is a variable ctx (globally CONTEXT). The RLM runs until it calls done(value), where value is returned as a string output for the original rlm_delegate call. This is very elegant and clean, but it also means that sub-delegation calls are not visible to the parent agent:

That’s the core problem with vanilla RLMs: a single rlm_delegate() call can hide an entire recursive subtree of LLM work, and nothing about that subtree survives the return. Children can delegate to children that can delegate to more children, and all the parent ever gets is a str. When the answer is wrong, you can’t tell which level of the recursion went off the rails; when the answer is right, you can’t tell whether it was right for the right reason. The abstraction is too clean: the act of delegating throws away exactly the structure you’d want to debug, evaluate, or steer.
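To make the contrast concrete, here is a toy sketch of the two return shapes. Nothing here is rlmflow's API: `opaque_delegate`, `traced_delegate`, and the `Trace` dataclass are hypothetical stand-ins, and the "LLM work" is replaced by fixed strings.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """Hypothetical record of one agent's result plus its children's records."""
    agent_id: str
    result: str
    children: list["Trace"] = field(default_factory=list)


def opaque_delegate(agent_id, depth):
    """Vanilla style: the recursion happens, but only a string comes back."""
    if depth == 0:
        return f"{agent_id}: leaf answer"
    parts = [opaque_delegate(f"{agent_id}.c{i}", depth - 1) for i in range(2)]
    return f"{agent_id}: combined {len(parts)} answers"


def traced_delegate(agent_id, depth):
    """Graph style: the same recursion, but the subtree survives the return."""
    if depth == 0:
        return Trace(agent_id, "leaf answer")
    kids = [traced_delegate(f"{agent_id}.c{i}", depth - 1) for i in range(2)]
    return Trace(agent_id, f"combined {len(kids)} answers", kids)


def agent_ids(trace):
    """Depth-first list of every agent that took part in the run."""
    ids = [trace.agent_id]
    for child in trace.children:
        ids.extend(agent_ids(child))
    return ids


print(opaque_delegate("root", 2))             # one string; six sub-agents are invisible
print(agent_ids(traced_delegate("root", 2)))  # all seven agents are addressable
```

The string version tells you the final answer and nothing else; the traced version lets you ask which of the seven agents produced what.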

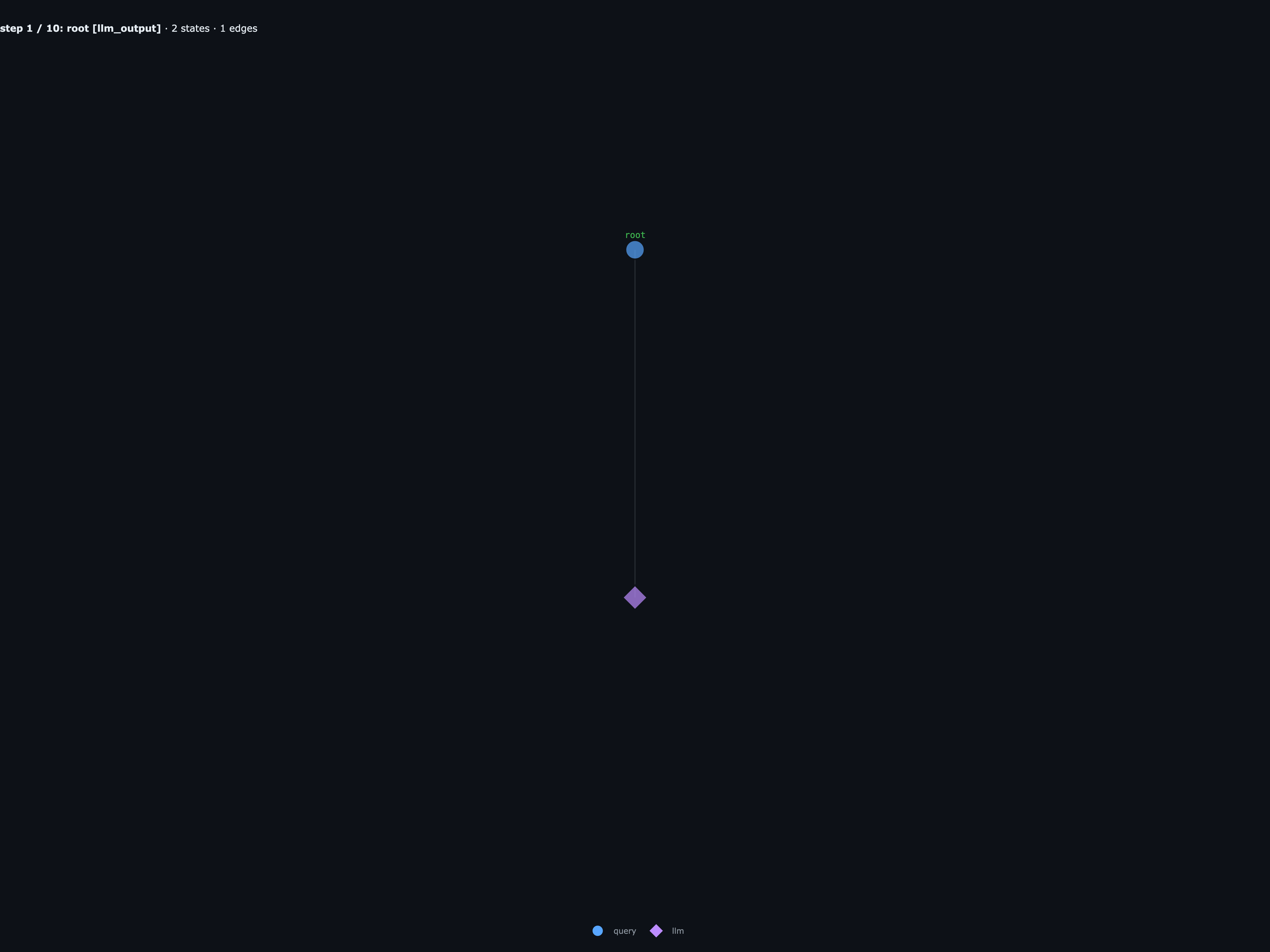

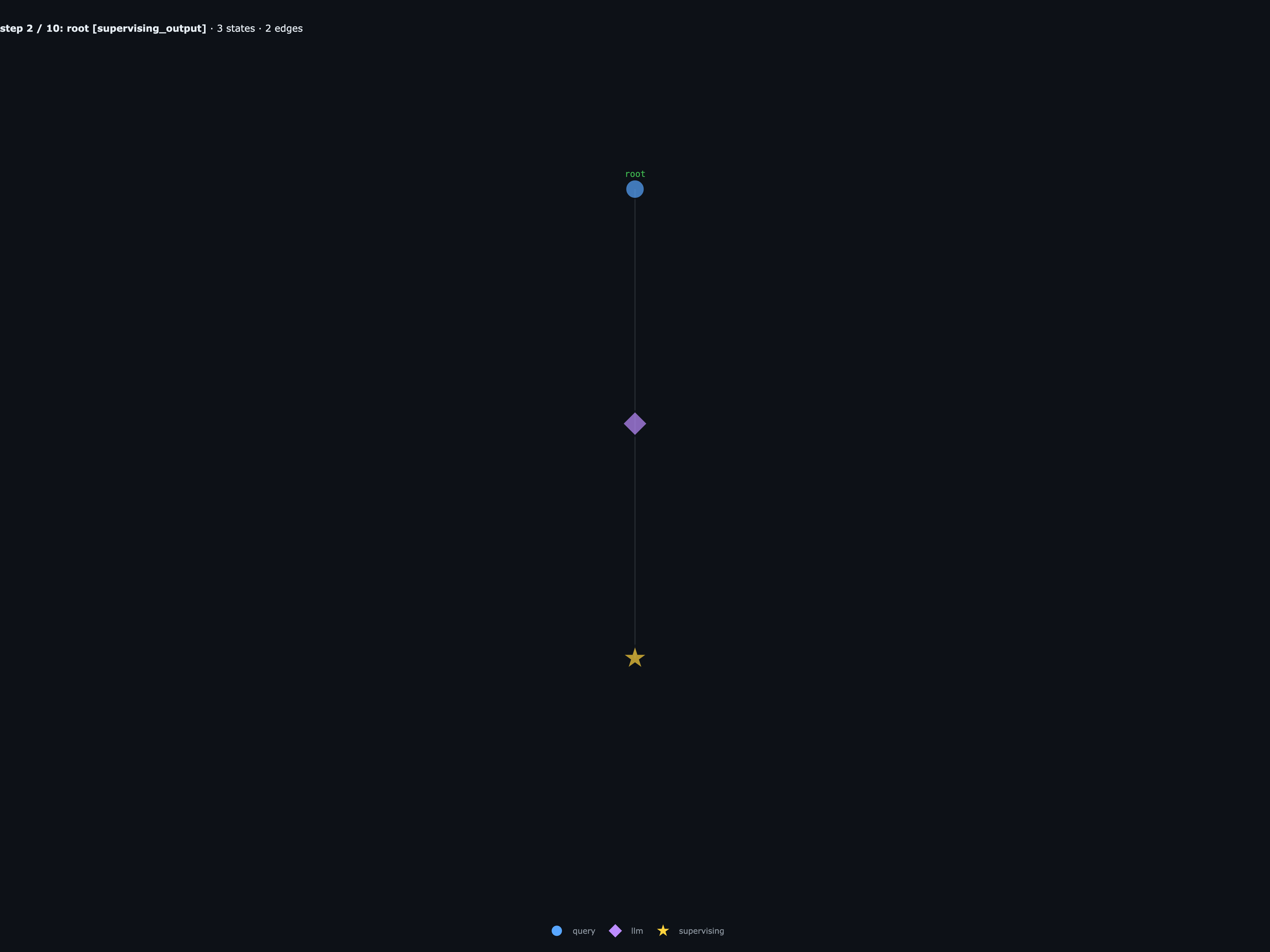

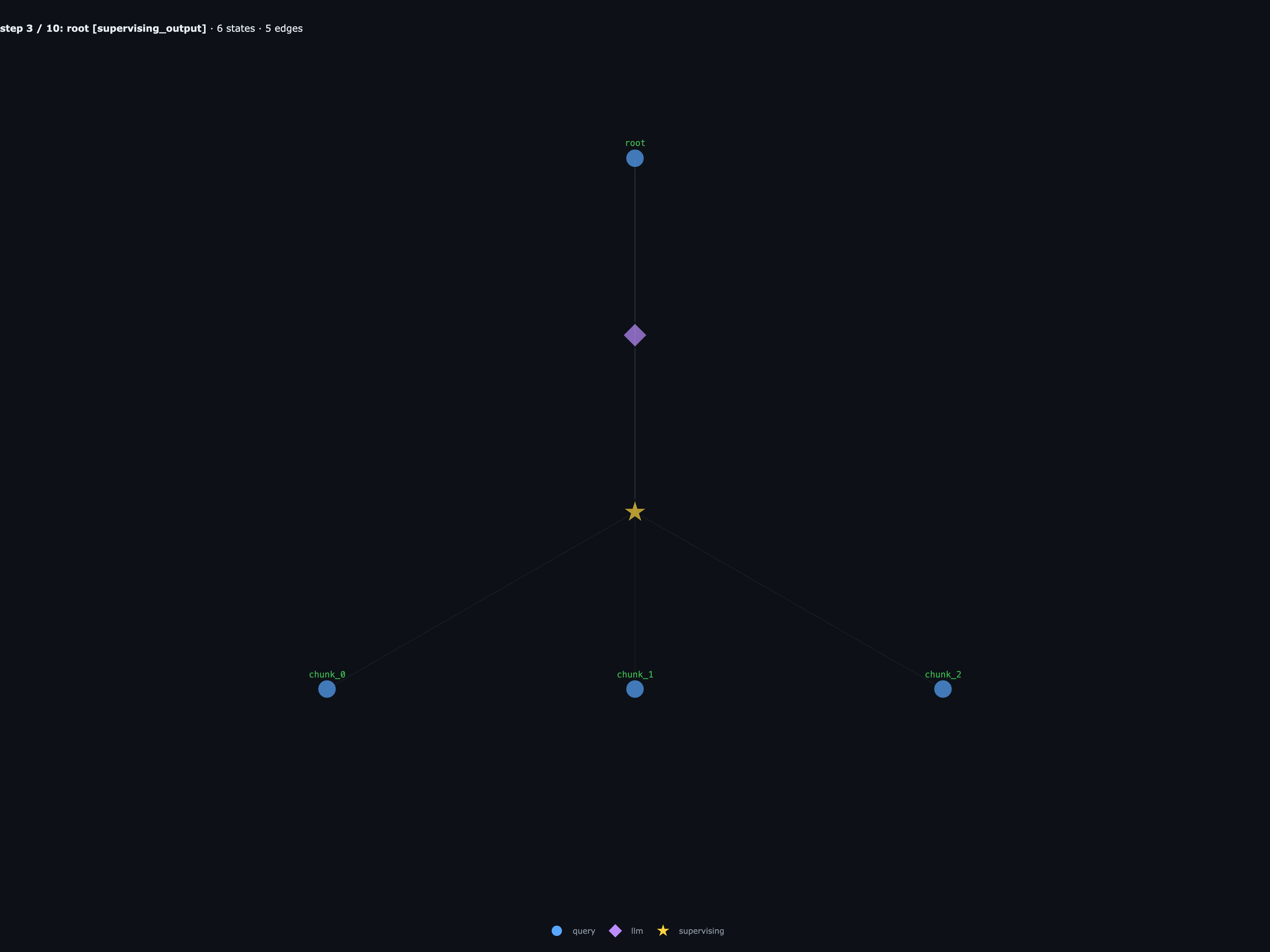

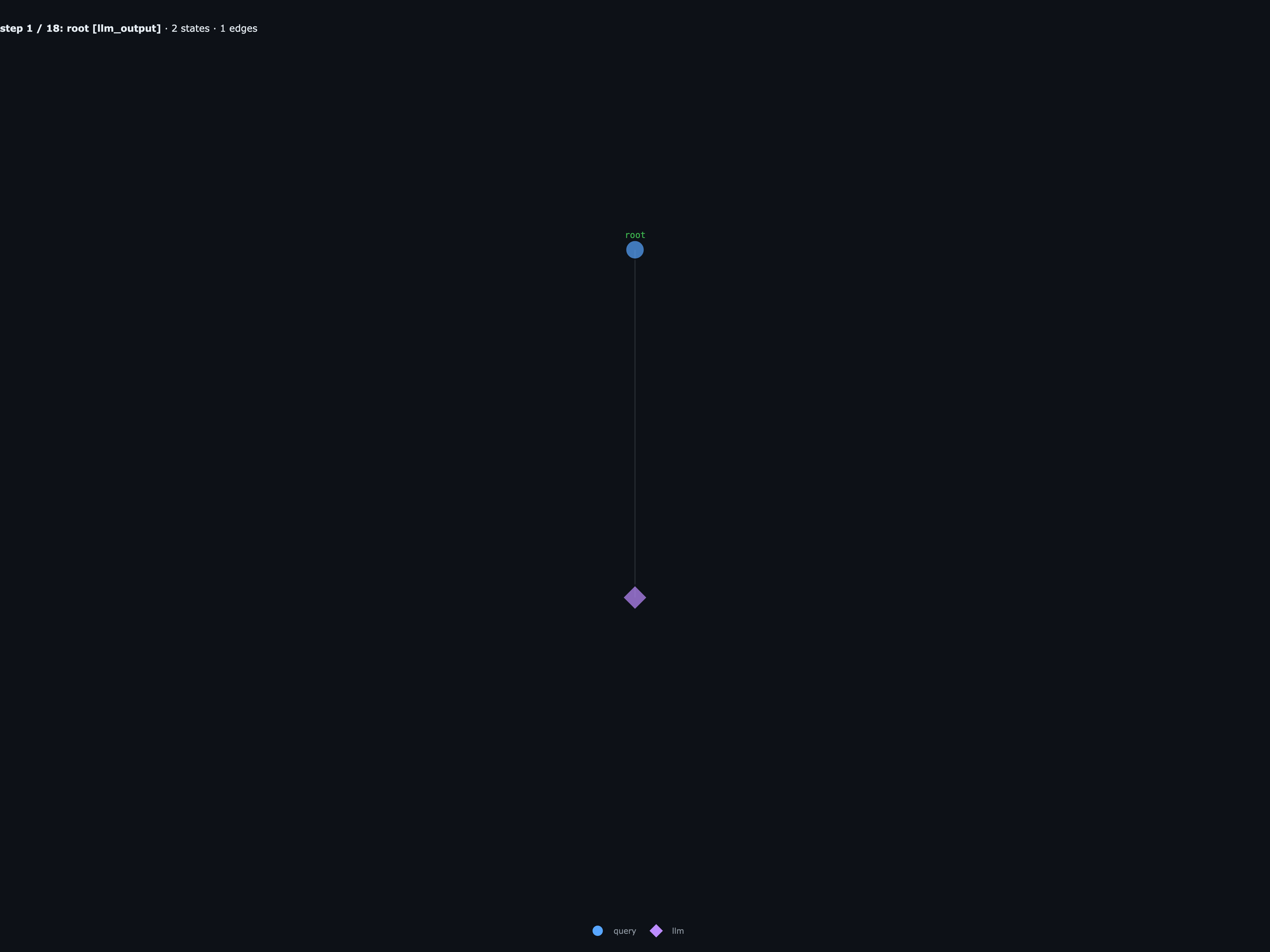

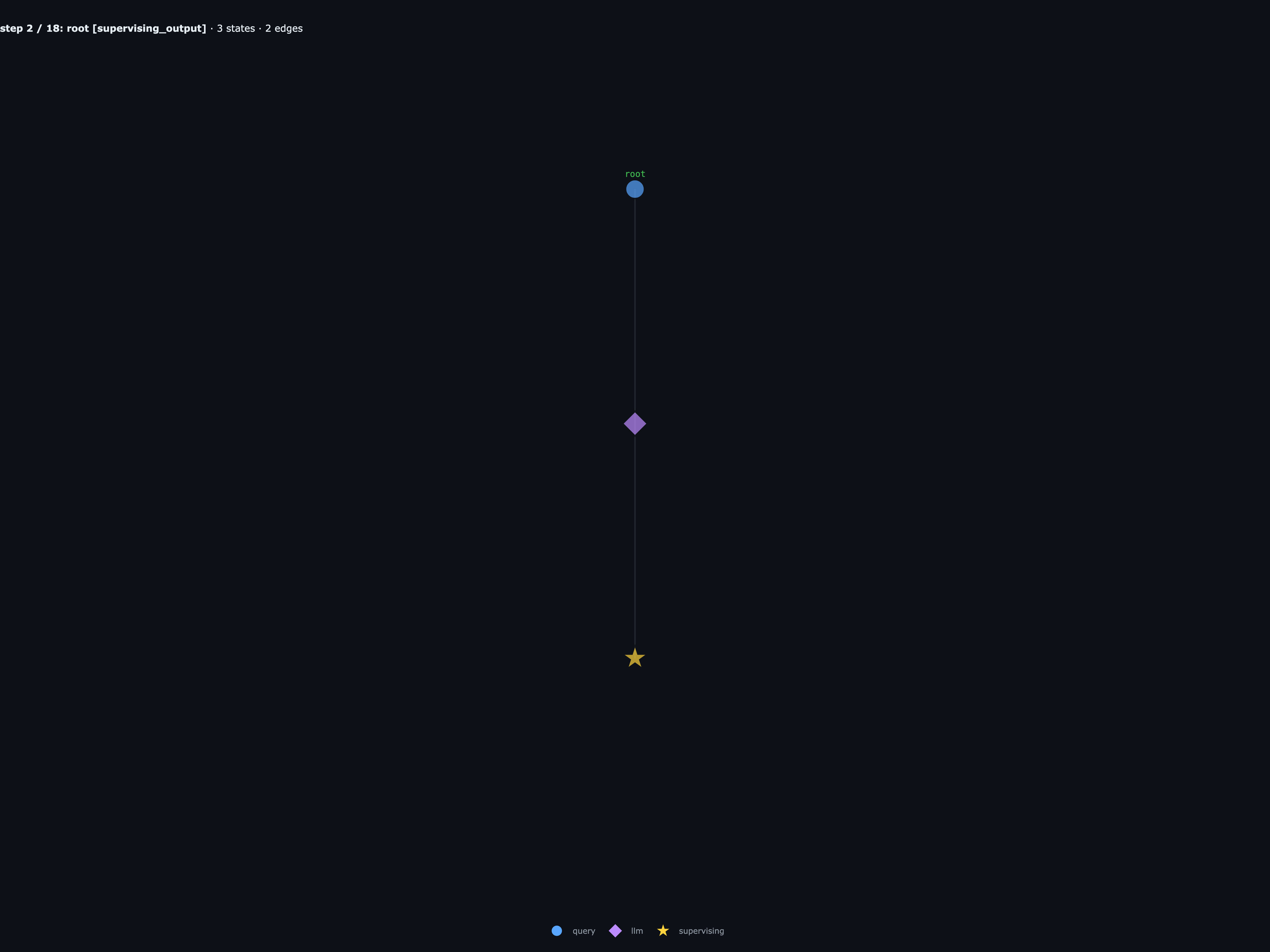

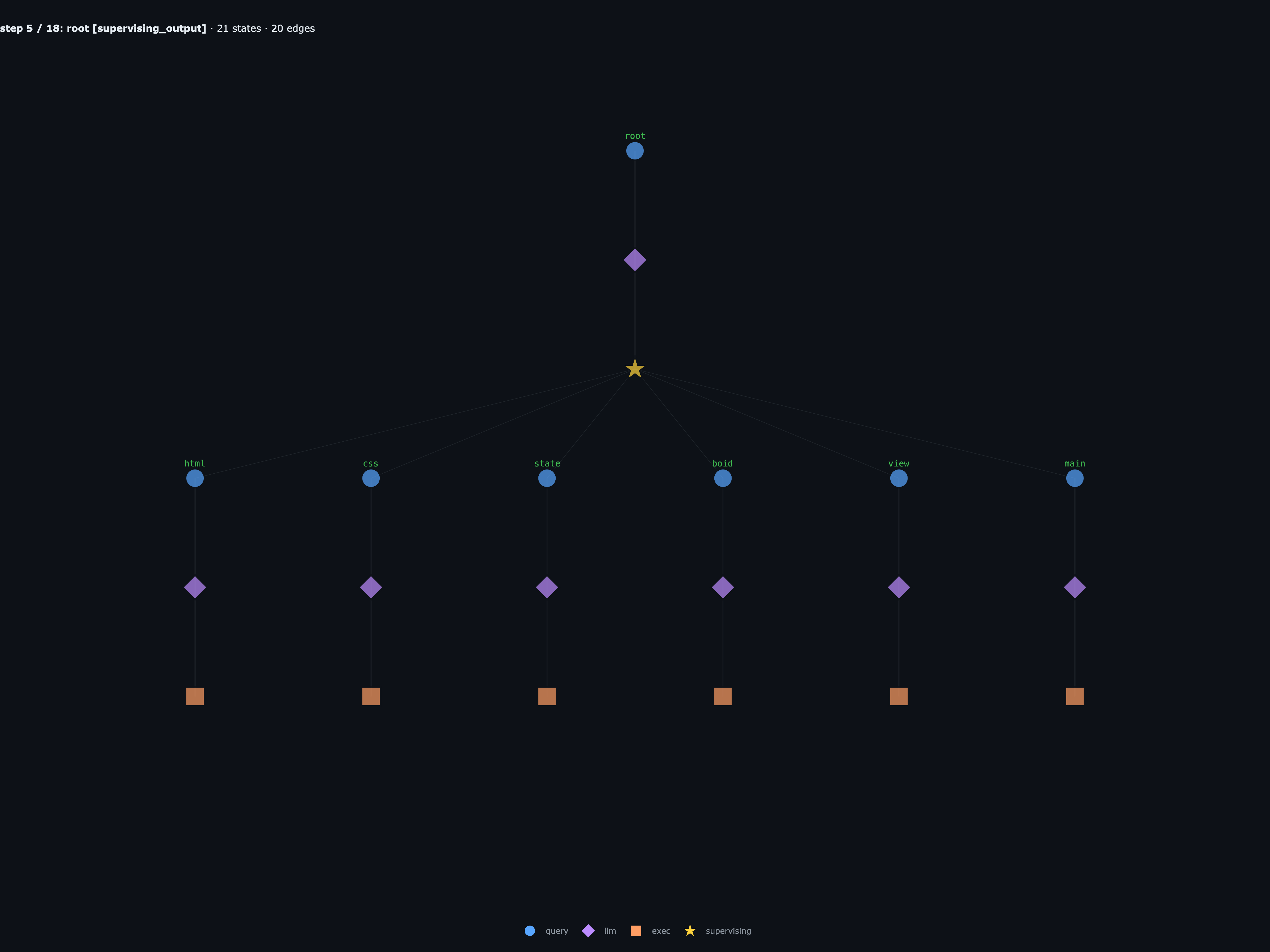

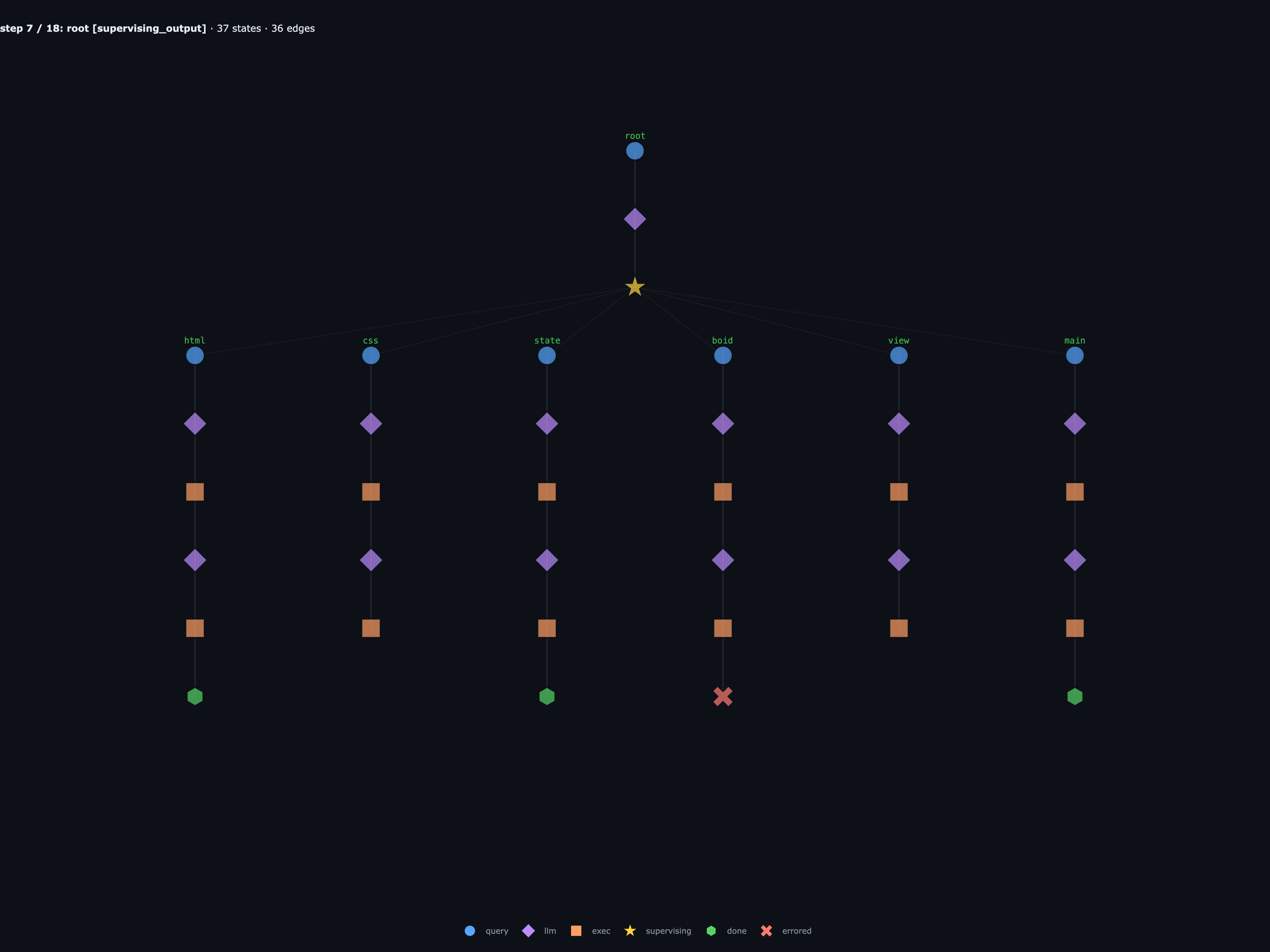

rlmflow keeps that structure at every step: every recursive call is a sub-Graph, and every turn inside an agent is a typed Node state that you can step through, inspect, and replay:

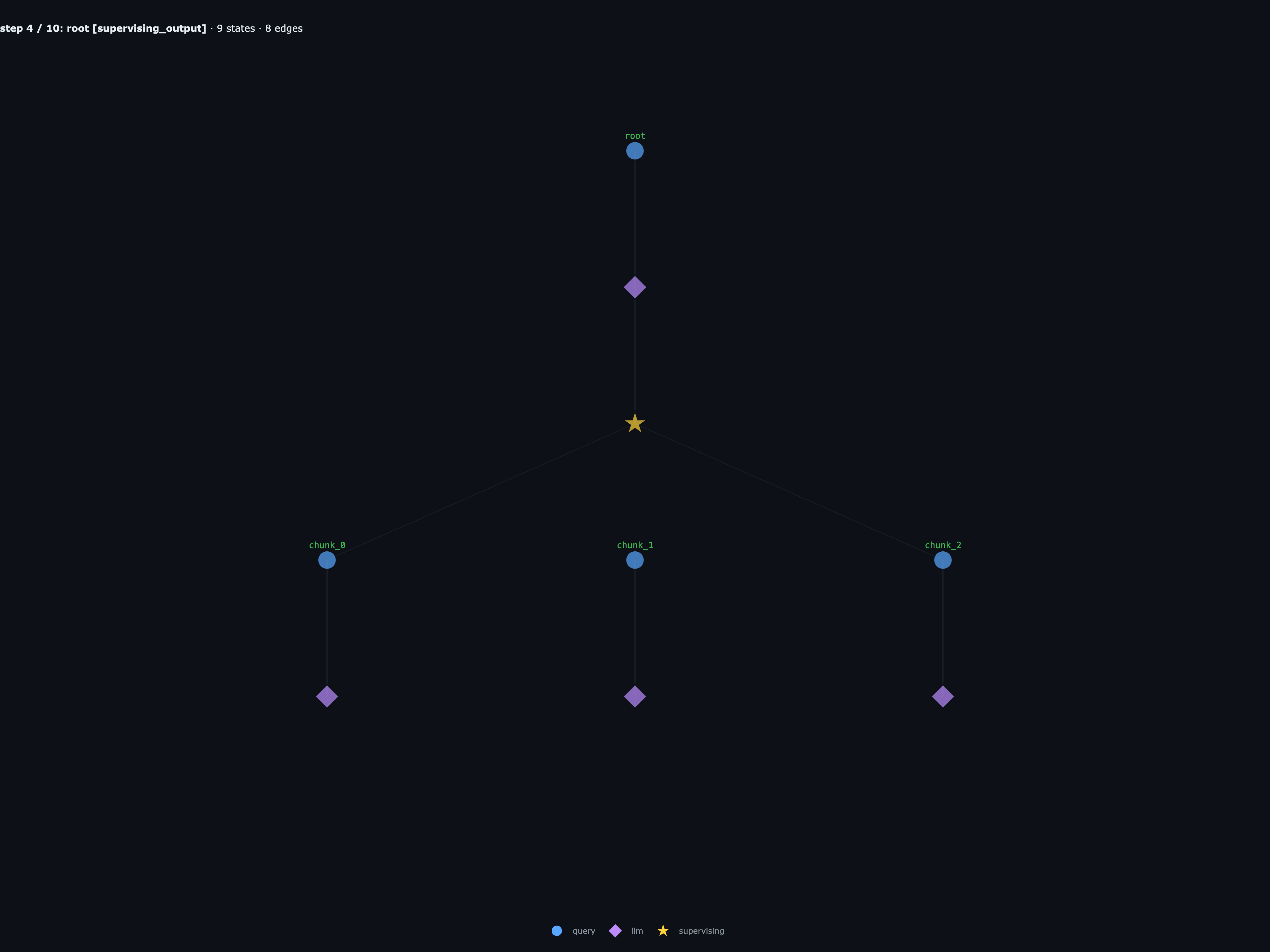

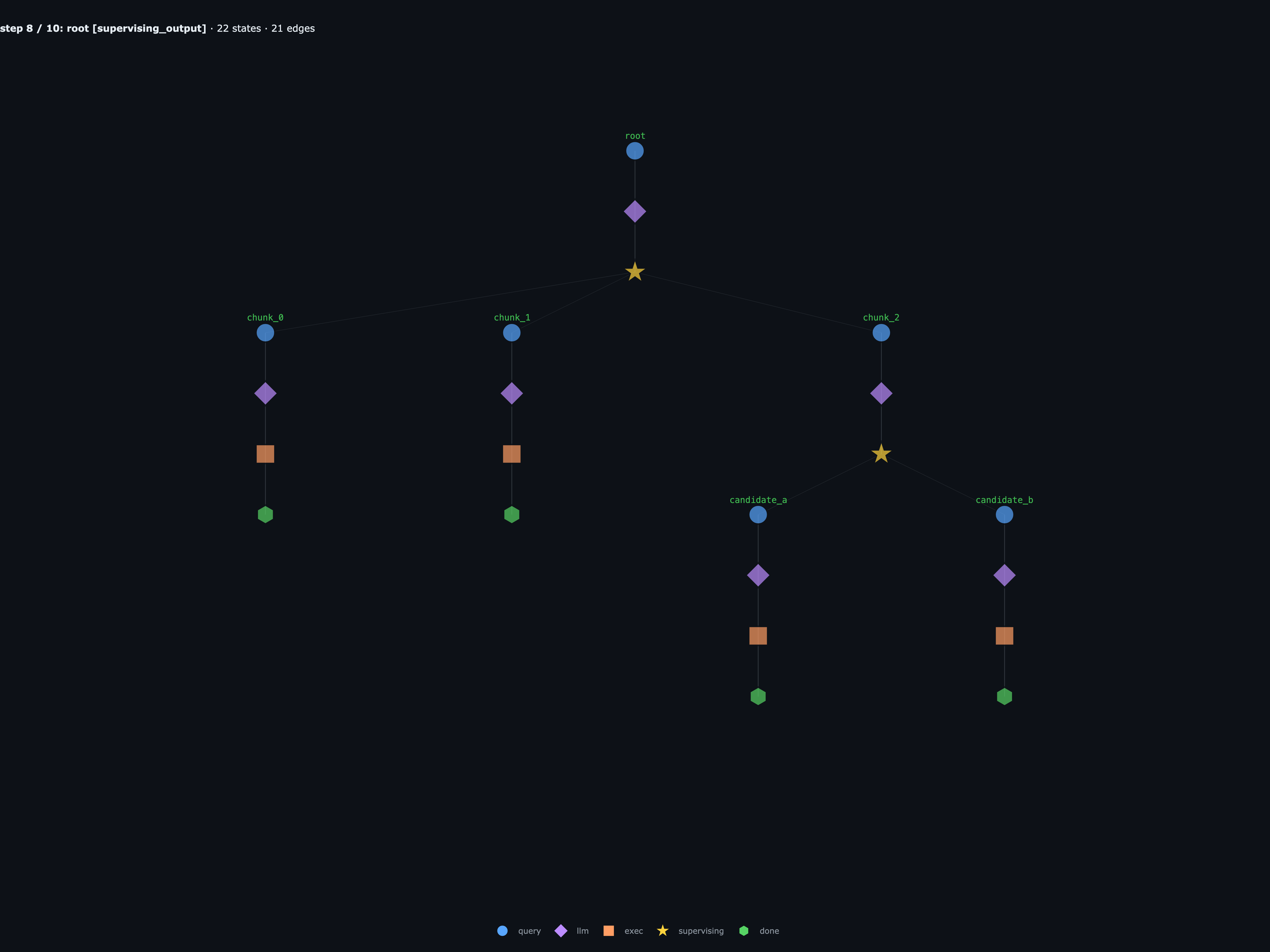

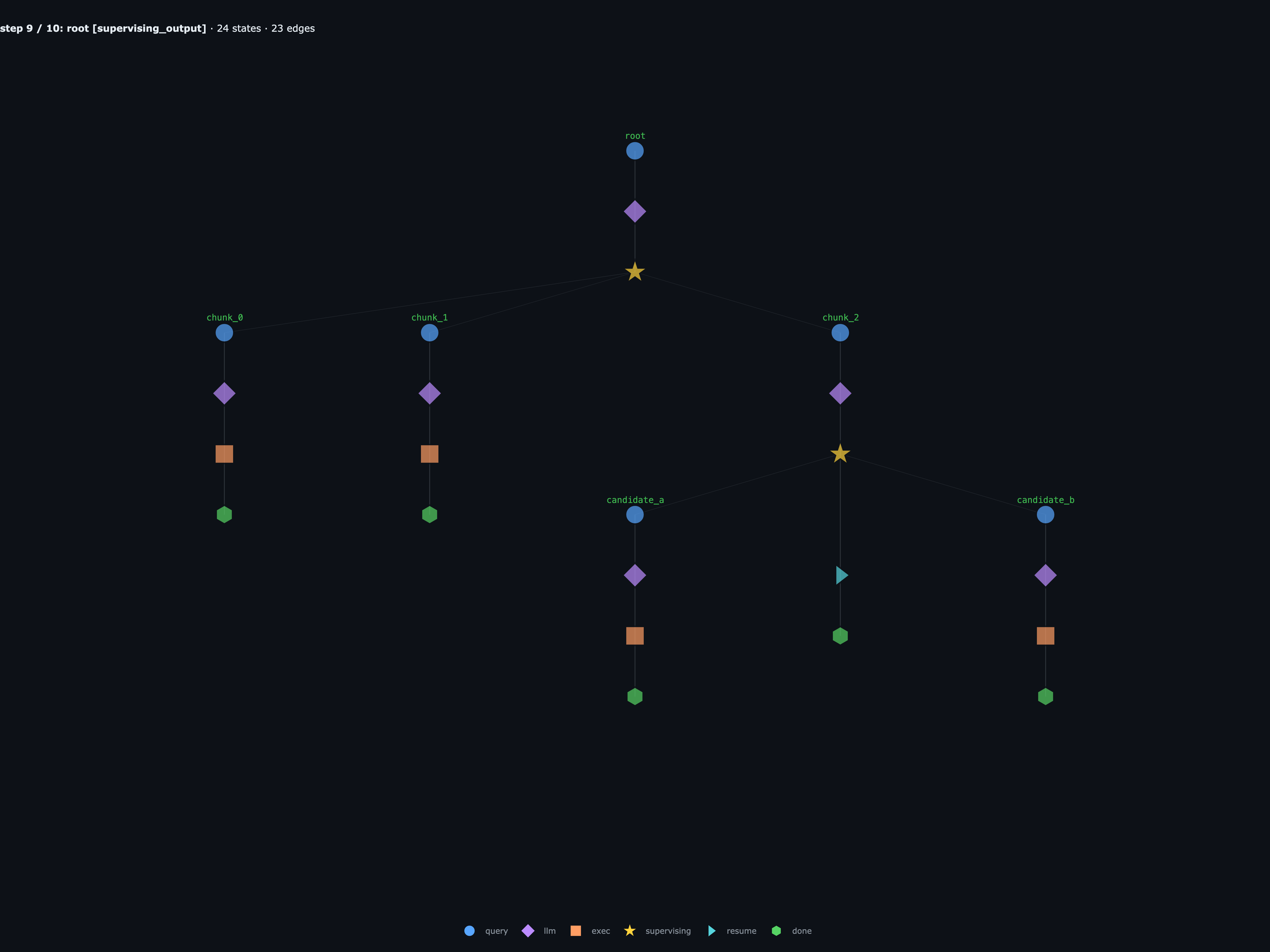

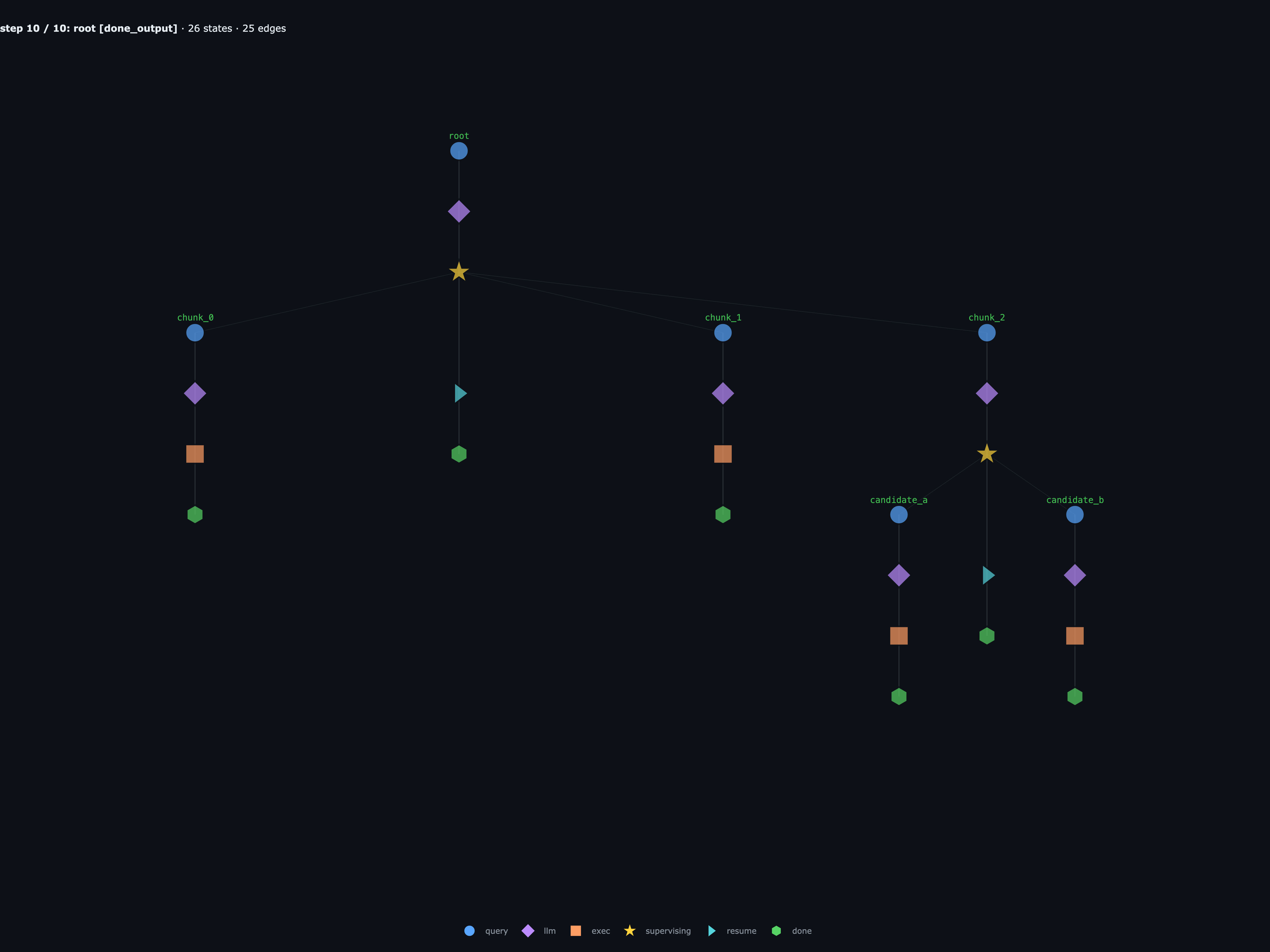

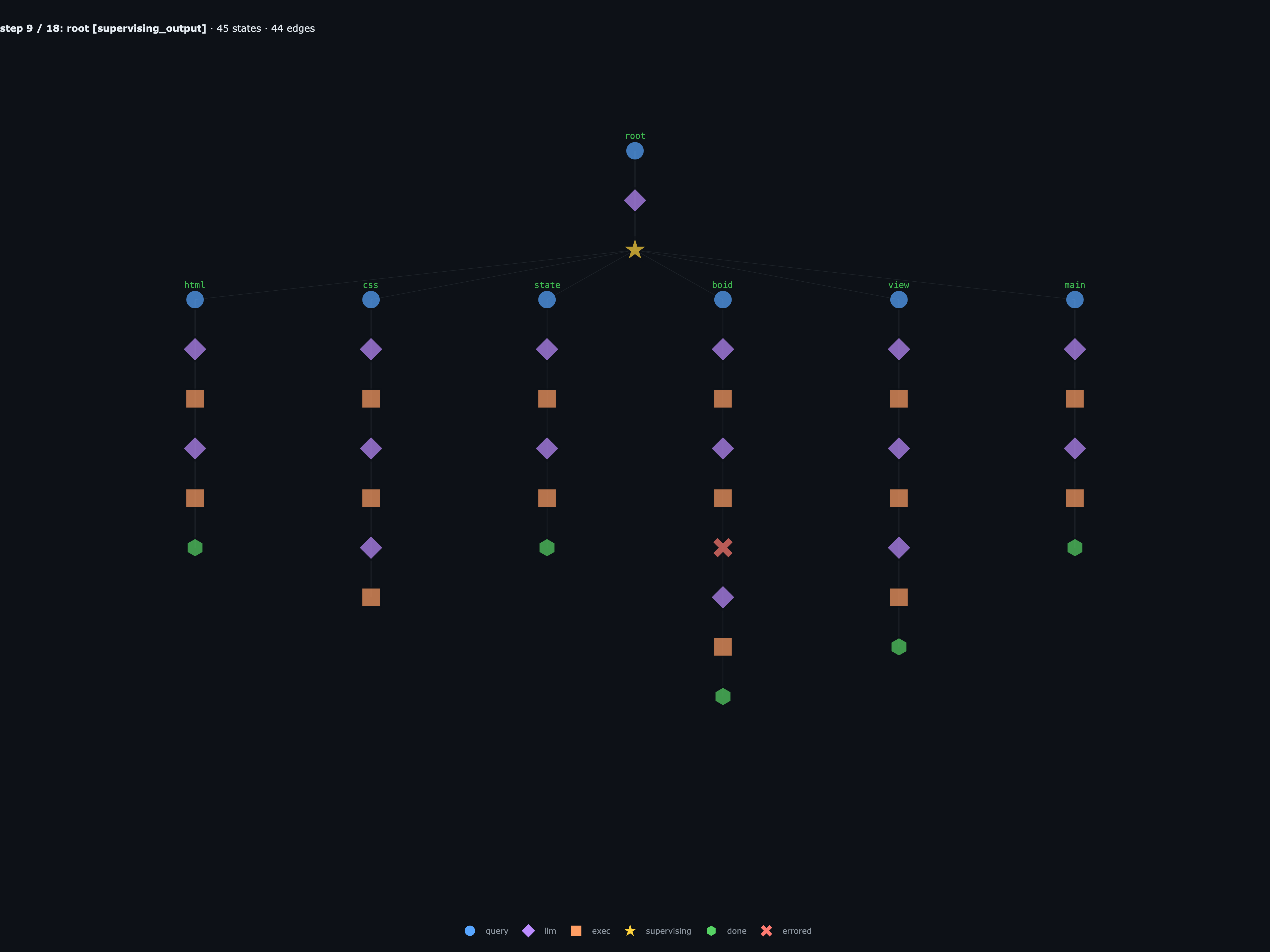

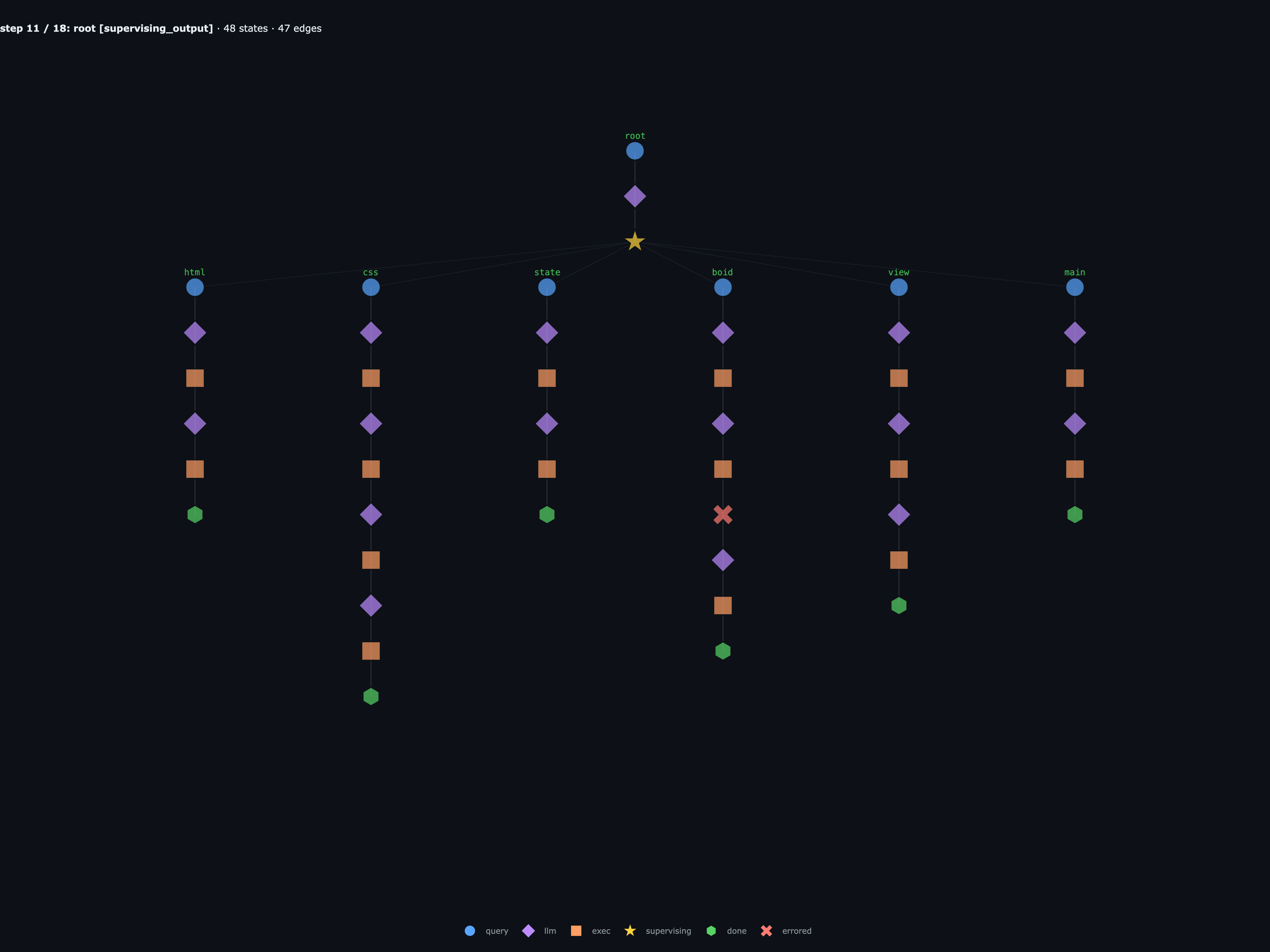

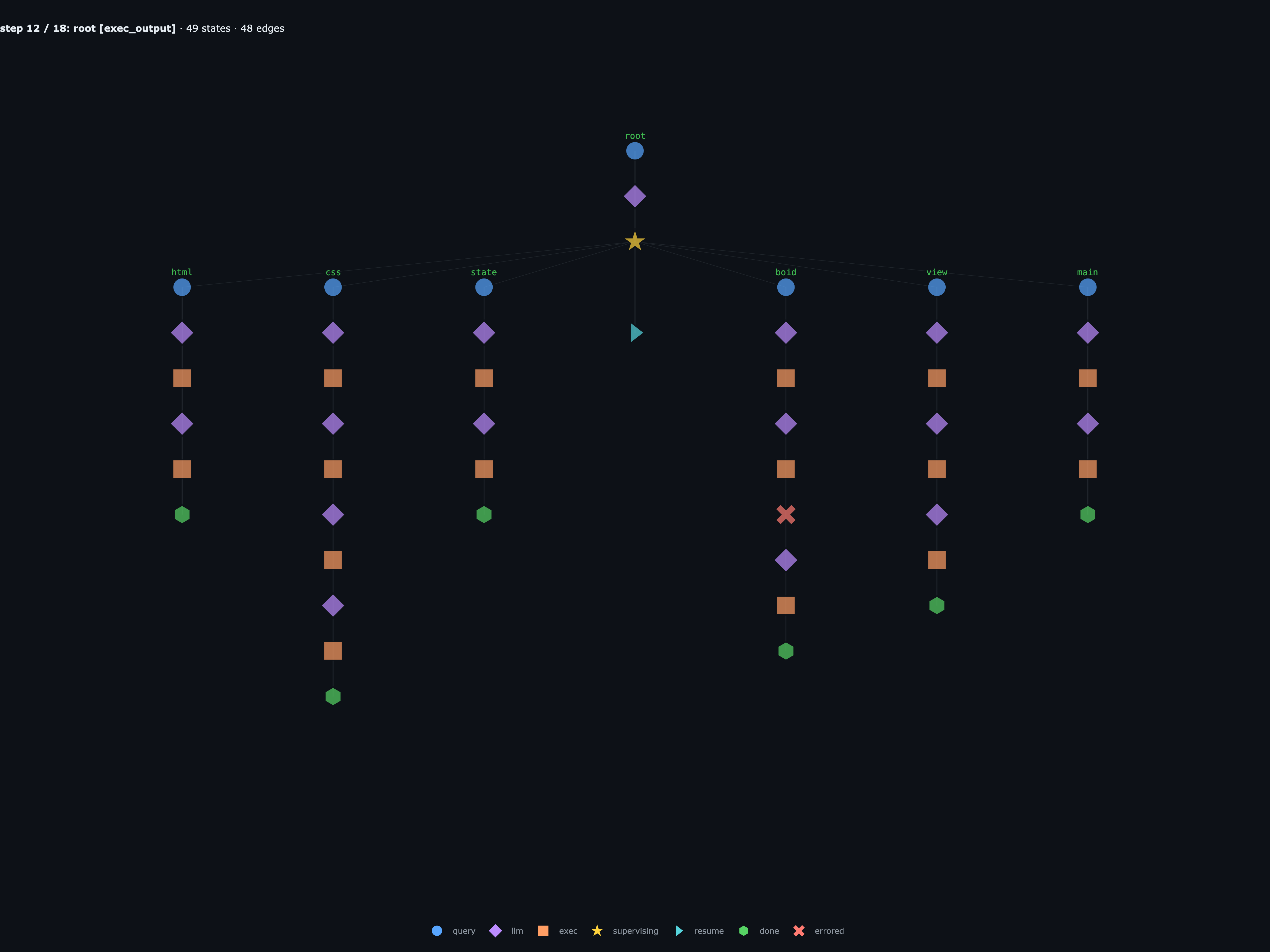

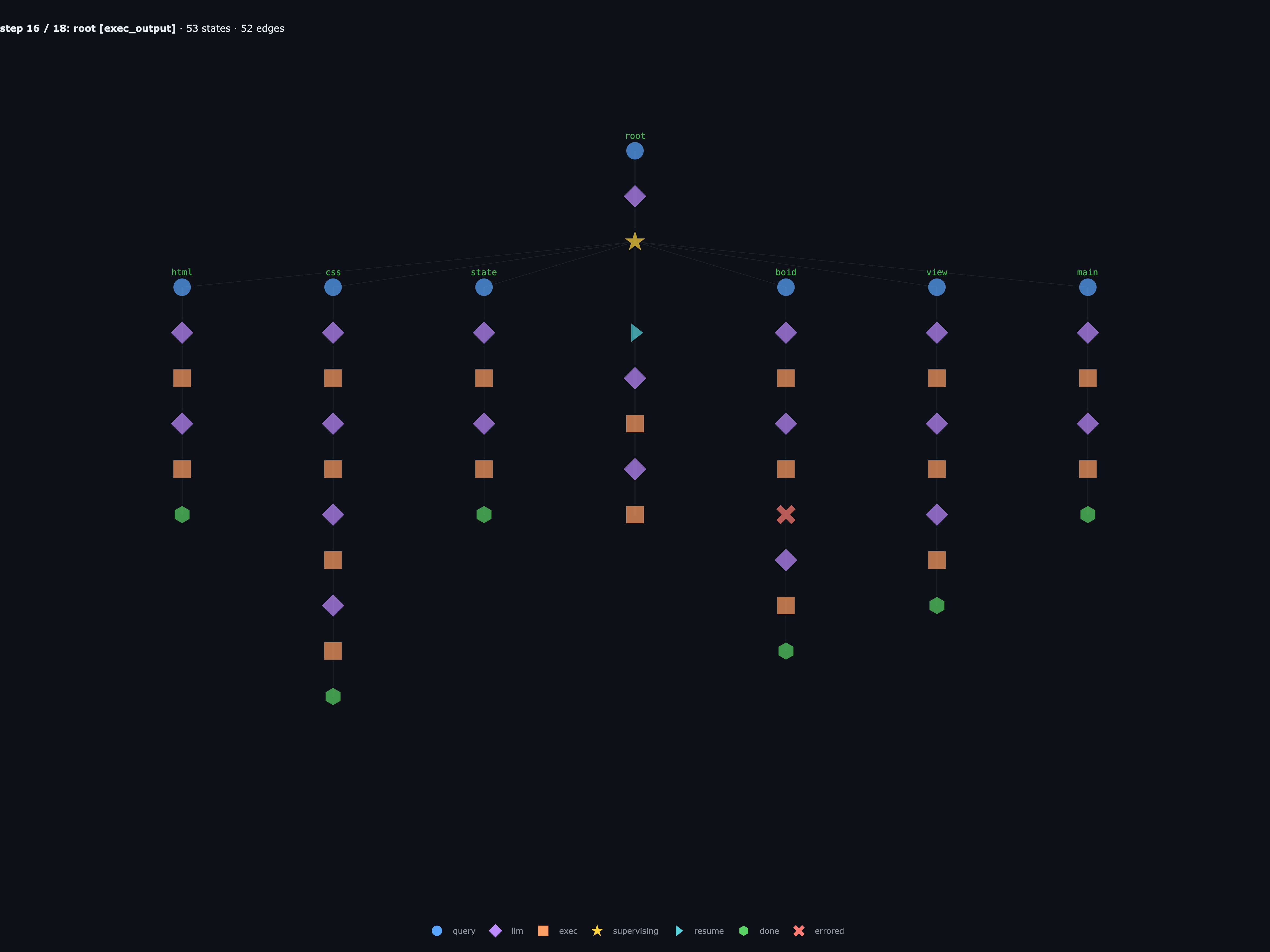

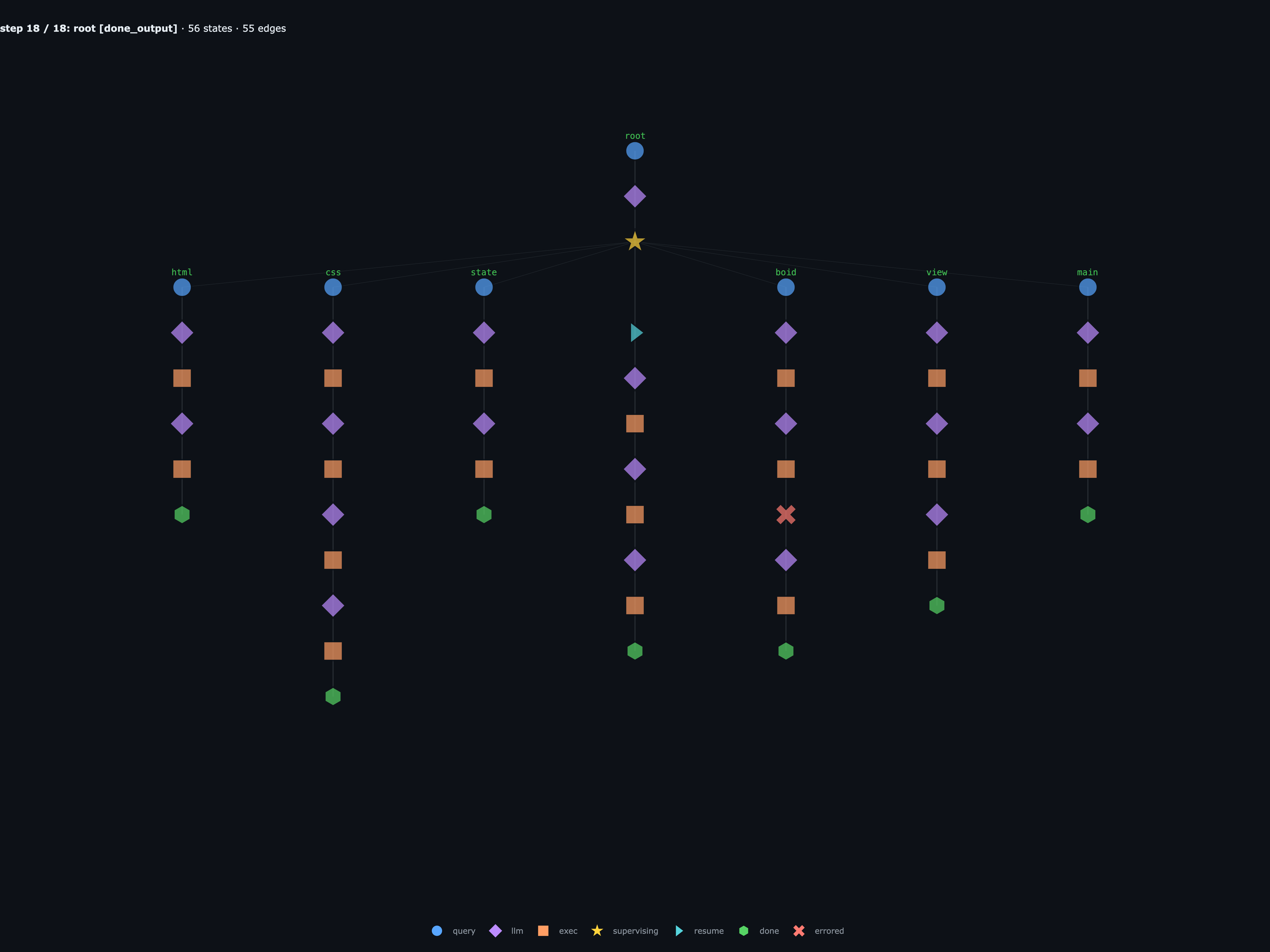

Same run, but now with step-by-step execution. When root hits its supervising node, the run pauses there, and you can see exactly what’s runnable next: root.chunk_0, root.chunk_1, root.chunk_2. They each advance on their own, so the parallel work actually shows up as parallel work instead of getting flattened into one linear conversation.

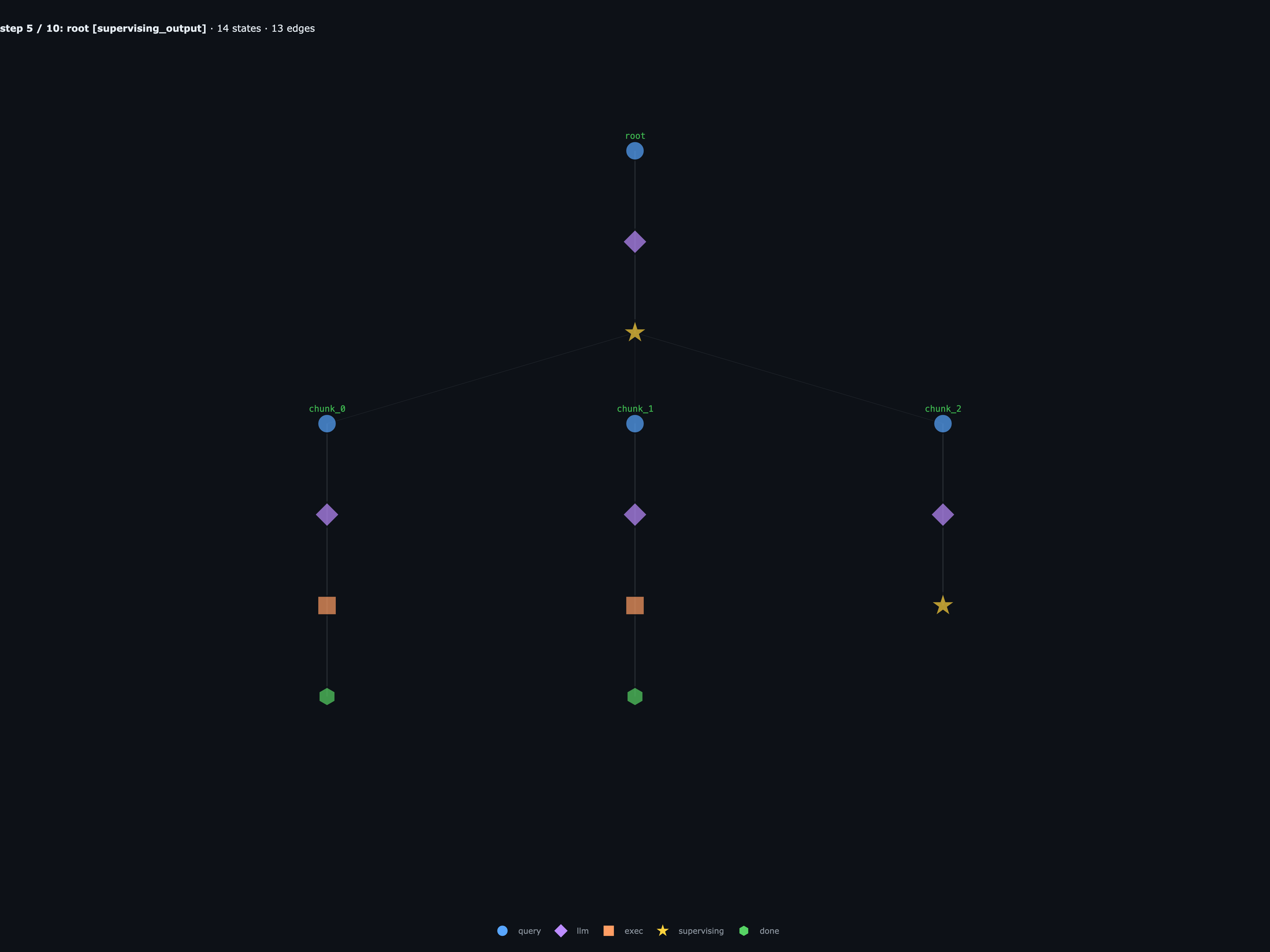

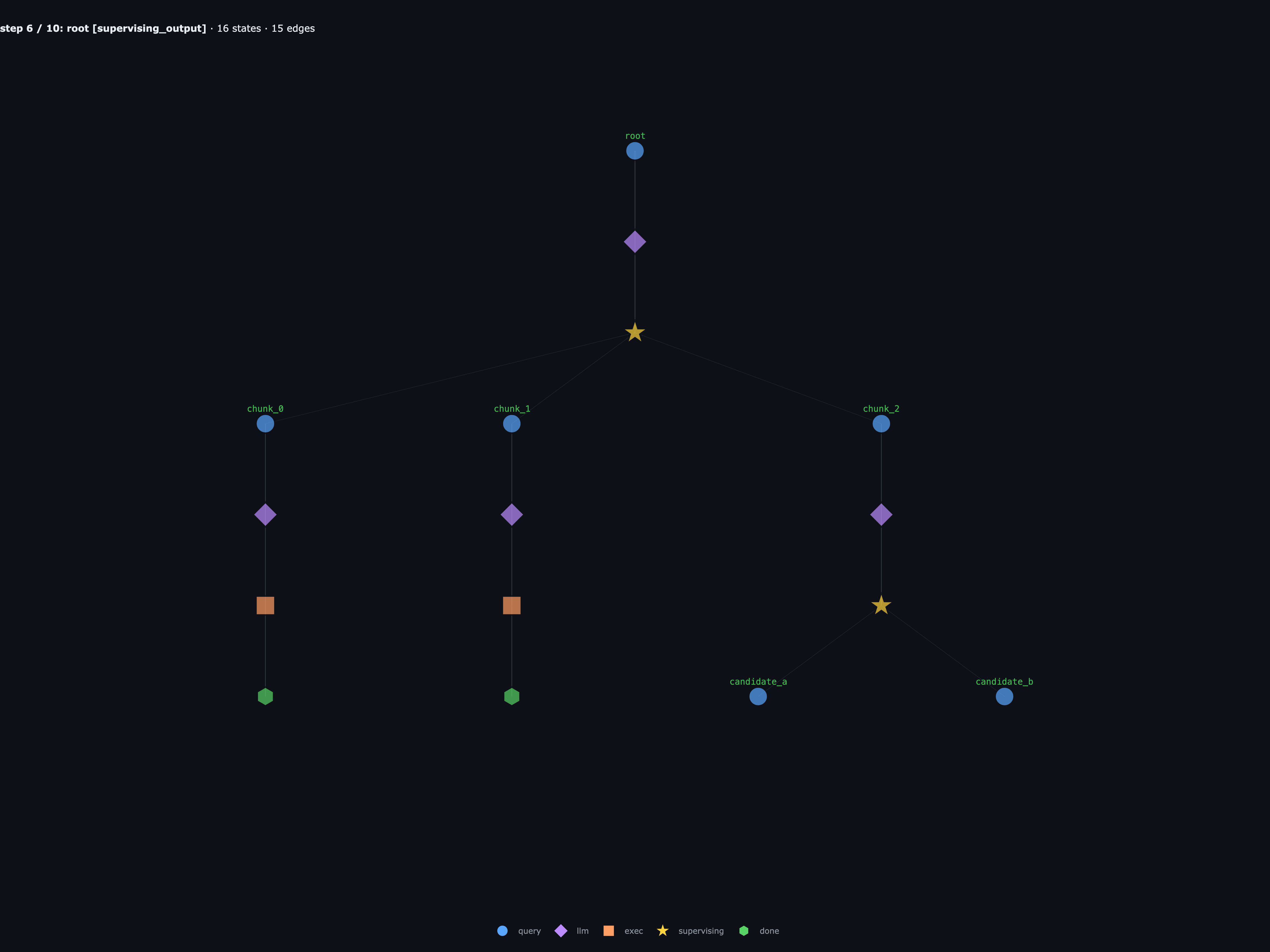

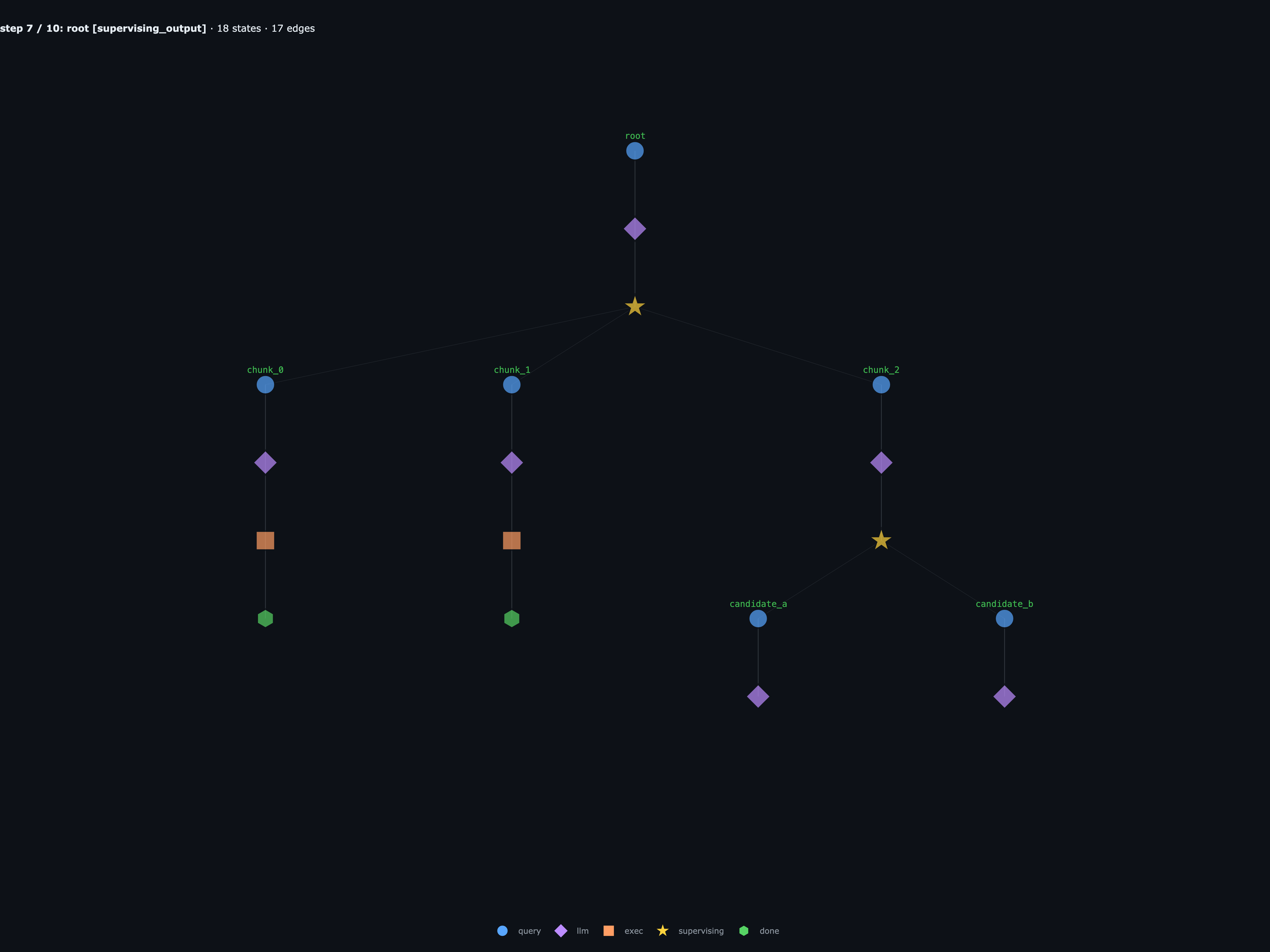

Same thing happens a level down. root.chunk_2 hits its own supervising node, and root.chunk_2.a and root.chunk_2.b take over while root.chunk_2 and root both sit waiting. Once those two finish, root.chunk_2 picks back up, returns 84721, and only then does root resume to verify the answer.

The key property of this graph abstraction is that no matter how deep the graph gets, you keep control over each step of each RLM and sub-RLM call. A failed branch is not just an opaque string return; you can inspect the exact child, turn, source or error state, resume point, and even fork the graph.

rlmflow internals

Similar to the original RLM implementation, we inject a stateful Python REPL with a few core features: a CONTEXT variable, a SESSION variable, and a recursive rlm_delegate(...) + rlm_wait primitive, all operating through a stateful graph structure.

Spawning recursive agents

The RLM uses rlm_delegate(...) and await rlm_wait(...) as its primary method of spawning children. rlm_delegate spawns a child with its own fresh context and returns a handle; await rlm_wait(*handles) parks the parent until those children settle, bubbling up messages from the children’s done(message) calls.

    handle = rlm_delegate(
        name="child_0",
        query="scan this chunk",
        context=child_ctx,
    )
    results = await rlm_wait(handle)
    done("Chunk found!")  # bubbled up to the parent

Because each child is its own Graph, the engine doesn’t drive them one at a time: every call to step(graph) advances all runnable leaves in parallel, and a parent unparks the moment its rlm_wait set is done.

That await rlm_wait(...) isn’t sugar — it’s a real Python coroutine suspension point. Each REPL block is wrapped in an async def shell and driven by the engine through coro.send():

    async def __rlm_coro__():
        h1 = rlm_delegate(name="search", query="...", context=chunk_a)
        h2 = rlm_delegate(name="verify", query="...", context=chunk_b)
        results = await rlm_wait(h1, h2)  # suspend here
        done(combine(results))

    coro = __rlm_coro__()  # create the coroutine object; nothing runs yet
    out = coro.send(None)  # run until the await
    # out is a WaitRequest([h1, h2]); the engine suspends the parent
    # and the user can step the children until they're terminal
    results = [c.result() for c in children]
    coro.send(results)  # resume; `results` is now the list
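If you want to see the suspension mechanism on its own, the following standalone sketch runs with only the standard library. It is an assumption-laden toy, not the engine: this `WaitRequest`, `rlm_wait`, and `drive` are hypothetical re-creations, and the handles are plain strings. It relies only on standard Python behaviour: an object whose `__await__` yields hands control to whoever calls `coro.send()`, and the value sent back in becomes the result of the `await`.

```python
class WaitRequest:
    """Hypothetical stand-in for the request a parent yields while parked."""

    def __init__(self, handles):
        self.handles = list(handles)

    def __await__(self):
        # Yield this request out to the driver; whatever the driver passes to
        # send() becomes the value of the `await` expression in the coroutine.
        results = yield self
        return results


def rlm_wait(*handles):
    return WaitRequest(handles)


async def parent():
    results = await rlm_wait("h1", "h2")  # suspends here
    return " + ".join(results)


def drive(coro, child_results):
    """Toy engine: run to the first await, then resume with the children's results."""
    request = coro.send(None)  # runs the body until the await
    assert isinstance(request, WaitRequest)
    try:
        coro.send(child_results)  # resume the parent
    except StopIteration as stop:
        # A finished coroutine reports its return value via StopIteration.
        return request.handles, stop.value
    raise RuntimeError("this toy driver only handles a single await")


handles, answer = drive(parent(), ["needle in A", "verified"])
print(handles, answer)
```

Between the two `send` calls the parent is fully paused, which is the window in which an engine can step children, checkpoint, or let a user inspect state.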

Concretely, one call to step(graph) on a tree with two leaves, one supervising parent, and one supervising grandparent looks like this — every leaf gets stepped at once, then the parents whose rlm_wait set has settled run on the next step:
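A toy version of that stepping rule, under simplifying assumptions: every agent finishes in a single turn, a graph is just a dict of frozen dataclasses, and the `Agent` type and this `step` function are hypothetical, not rlmflow's. The only thing it models is the scheduling order.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Agent:
    status: str            # "running", "waiting", or "done"
    waits_on: tuple = ()   # ids of the children this agent is parked on


def step(graph):
    """Return a new graph in which every runnable agent has advanced once.

    Readiness is judged against the *incoming* graph, so a parent resumes
    on the step after its wait set settles, never in the same step.
    """
    new = {}
    for agent_id, agent in graph.items():
        if agent.status == "running":
            new[agent_id] = replace(agent, status="done")
        elif agent.status == "waiting" and all(
            graph[child].status == "done" for child in agent.waits_on
        ):
            new[agent_id] = replace(agent, status="done", waits_on=())
        else:
            new[agent_id] = agent
    return new


# Two leaves, one supervising parent, one supervising grandparent.
graph = {
    "root": Agent("waiting", ("root.a",)),
    "root.a": Agent("waiting", ("root.a.x", "root.a.y")),
    "root.a.x": Agent("running"),
    "root.a.y": Agent("running"),
}

history = [graph]
while any(agent.status != "done" for agent in graph.values()):
    graph = step(graph)
    history.append(graph)

for i, snapshot in enumerate(history):
    print(i, {agent_id: agent.status for agent_id, agent in snapshot.items()})
```

Because `step` returns a new dict and never mutates the old one, `history` doubles as the trace: each snapshot is a point you could resume from.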

Context and Session

Each agent gets two read-only views injected into its REPL: CONTEXT is the per-agent data slot (the long input passed in via context=..., sliced and grepped on demand), and SESSION is a window onto every other agent in the run — its tree, transcripts, and results — so a child can look up what a sibling already did instead of redoing the work:

    # inspect this agent's context window
    CONTEXT.info()
    CONTEXT.read(start=0, end=None)
    CONTEXT.lines(start=0, end=None)
    CONTEXT.grep(pattern, max_results=50)

    child_ctx = "This is child context..."

    # inspect the raw messages of nodes / agents
    SESSION.tree()
    SESSION.list_agents()
    SESSION.read("root.child_0")
    SESSION.grep("needle", max_results=50)
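For intuition about what a read-only context view does, here is a minimal in-memory sketch. The method names mirror the calls listed above, but the class itself, its return types, and its behaviour are assumptions for illustration; the real CONTEXT object may differ on all three.

```python
import re
from typing import Optional


class ContextView:
    """Hypothetical read-only view over one agent's context string."""

    def __init__(self, text: str):
        self._text = text

    def info(self) -> dict:
        # Cheap metadata, so an agent can size up the context before reading it.
        return {"chars": len(self._text), "lines": len(self._text.splitlines())}

    def read(self, start: int = 0, end: Optional[int] = None) -> str:
        """Character slice of the context."""
        return self._text[start:end]

    def lines(self, start: int = 0, end: Optional[int] = None) -> list:
        """Line slice of the context."""
        return self._text.splitlines()[start:end]

    def grep(self, pattern: str, max_results: int = 50) -> list:
        """Return up to max_results (line_number, line) pairs matching a regex."""
        regex = re.compile(pattern)
        hits = []
        for lineno, line in enumerate(self._text.splitlines()):
            if regex.search(line):
                hits.append((lineno, line))
                if len(hits) >= max_results:
                    break
        return hits


ctx = ContextView("alpha\nthe secret code is 84721\nomega")
print(ctx.info()["lines"], ctx.grep("secret"))
```

The useful property is that none of these calls put the whole context into the model's window; the agent pulls in only the slice or matches it asks for.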

The CONTEXT and SESSION variables are directly backed by a workspace, where rlmflow persists context views and per-agent session logs:

    workspace/
    ├── context/        # per-agent context files
    │   ├── root/
    │   ├── root.<child_0>/
    │   ├── root.<child_1>/
    │   └── ...
    ├── graph.json      # root id + registered agents
    ├── session/        # latest.json + session.jsonl per agent
    │   ├── root/
    │   ├── root.<child_0>/
    │   ├── root.<child_1>/
    │   └── ...

By default the workspace is a directory on the local filesystem, but it can be backed by any storage type.
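As a rough picture of how a per-agent session log like this can be kept on disk, here is a stdlib-only sketch. The directory names follow the layout above, but everything else is an assumption: the record fields (`type`, `content`), the idea that session.jsonl is append-only, and that latest.json mirrors the last record are guesses for illustration, and `append_state` / `load_states` are hypothetical helpers.

```python
import json
import tempfile
from pathlib import Path


def append_state(workspace: Path, agent_id: str, state: dict) -> None:
    """Append one state to the agent's log and refresh its latest snapshot."""
    agent_dir = workspace / "session" / agent_id
    agent_dir.mkdir(parents=True, exist_ok=True)
    with (agent_dir / "session.jsonl").open("a", encoding="utf-8") as log:
        log.write(json.dumps(state) + "\n")
    (agent_dir / "latest.json").write_text(json.dumps(state), encoding="utf-8")


def load_states(workspace: Path, agent_id: str) -> list:
    """Read back every state recorded for one agent, oldest first."""
    path = workspace / "session" / agent_id / "session.jsonl"
    return [json.loads(line) for line in path.read_text(encoding="utf-8").splitlines()]


with tempfile.TemporaryDirectory() as tmp:
    ws = Path(tmp)
    # Hypothetical record shapes, for illustration only.
    append_state(ws, "root.child_0", {"type": "query", "content": "scan this chunk"})
    append_state(ws, "root.child_0", {"type": "done_output", "content": "84721"})
    states = load_states(ws, "root.child_0")
    latest_path = ws / "session" / "root.child_0" / "latest.json"
    latest = json.loads(latest_path.read_text(encoding="utf-8"))

print(len(states), latest["type"])
```

An append-only log plus a latest snapshot is one simple way to get both a full history and a cheap resume point.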

Recursive Coding Agent

Here’s all you need for a fully observable recursive coding agent, built with rlmflow, equipped with recursive calling, grep, and filesystem operations:

    from pathlib import Path

    from rlmflow.llm import OpenAIClient
    from rlmflow.rlm import RLMConfig, RLMFlow
    from rlmflow.runtime.local import LocalRuntime
    from rlmflow.tools import FILE_TOOLS
    from rlmflow.workspace import Workspace

    # Canonical example dir: node_basics.ipynb and viz_walkthrough.ipynb both
    # read from here. Running this notebook live overwrites it.
    WORKSPACE_DIR = Path("./notebook-coding-agent").resolve()


    def build_agent(
        workspace_dir: str | Path = WORKSPACE_DIR,
        max_depth: int = 3,
        max_iterations: int = 30,
    ) -> RLMFlow:
        """Construct a coding agent identical to examples/coding-agent/agent.py."""
        workspace = Workspace.create(Path(workspace_dir).resolve())
        runtime = LocalRuntime(workspace=workspace)
        runtime.register_tools(FILE_TOOLS)
        return RLMFlow(
            llm_client=OpenAIClient("gpt-5"),
            runtime=runtime,
            workspace=workspace,
            config=RLMConfig(max_depth=max_depth, max_iterations=max_iterations),
            llm_clients={
                "fast": {
                    "model": OpenAIClient("gpt-5-mini"),
                    "description": "Cheap model for smaller subtasks",
                },
            },
        )

And the whole main loop:

    query = ...
    agent = build_agent(max_depth=3)
    graph = agent.start(query)
    while not graph.finished:
        graph = agent.step(graph)

    # print final graph
    print(graph.tree())

Boids Simulation

In this example we want to generate a boids simulation in pure HTML and JavaScript.

    TASK = """Create a runnable browser-based boids simulation in plain HTML, CSS, and JavaScript.

    Requirements:
    - The main runnable interface is `index.html`.
    - Use separate files for:
      - `index.html`
      - `style.css`
      - javascript files
    - Do not use build tools or external libraries.
    - Do not use ES modules; wire scripts with `<script src="..."></script>` tags.
    - Render 100s of fast-moving, colorful boids on a 2D canvas. Do not add configurations, just the canvas.
    - Verify that all files exist, script tags are ordered correctly, and the JavaScript has no obvious syntax/runtime wiring errors before returning.
    """

    agent = build_agent(max_depth=2, workspace_dir="./boids-sim-workspace")
    graph = agent.start(TASK)
    while not graph.finished:
        graph = agent.step(graph)

A generated boids simulation actually built by the RLM:

You can view actual generated code here:

boids-sim-workspace/

- context/

- root/

- context.txt

- context_metadata.json

- root.boid/

- context.txt

- context_metadata.json

- root.css/

- context.txt

- context_metadata.json

- root.html/

- context.txt

- context_metadata.json

- root.main/

- context.txt

- context_metadata.json

- root.state/

- context.txt

- context_metadata.json

- root.view/

- context.txt

- context_metadata.json

- root/

- frames/

- step_00.png

- step_01.png

- step_02.png

- step_03.png

- step_04.png

- step_05.png

- step_06.png

- step_07.png

- step_08.png

- step_09.png

- step_10.png

- step_11.png

- step_12.png

- step_13.png

- step_14.png

- step_15.png

- step_16.png

- step_17.png

- step_18.png

- scripts/

- boid.js

- main.js

- state.js

- view.js

- session/

- root/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.boid/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.css/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.html/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.main/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.state/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.view/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root/

- graph.json

- index.html

- style.css

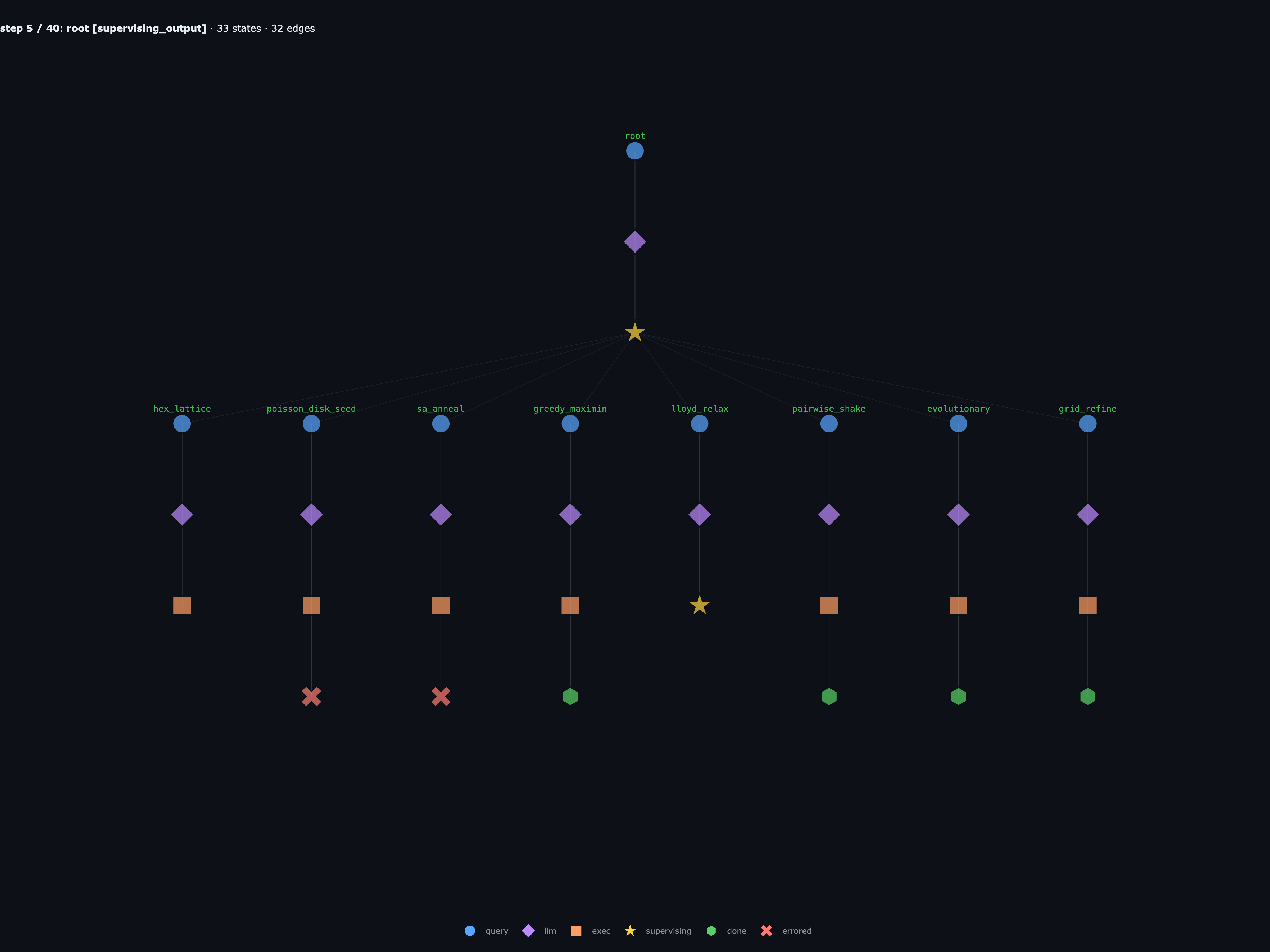

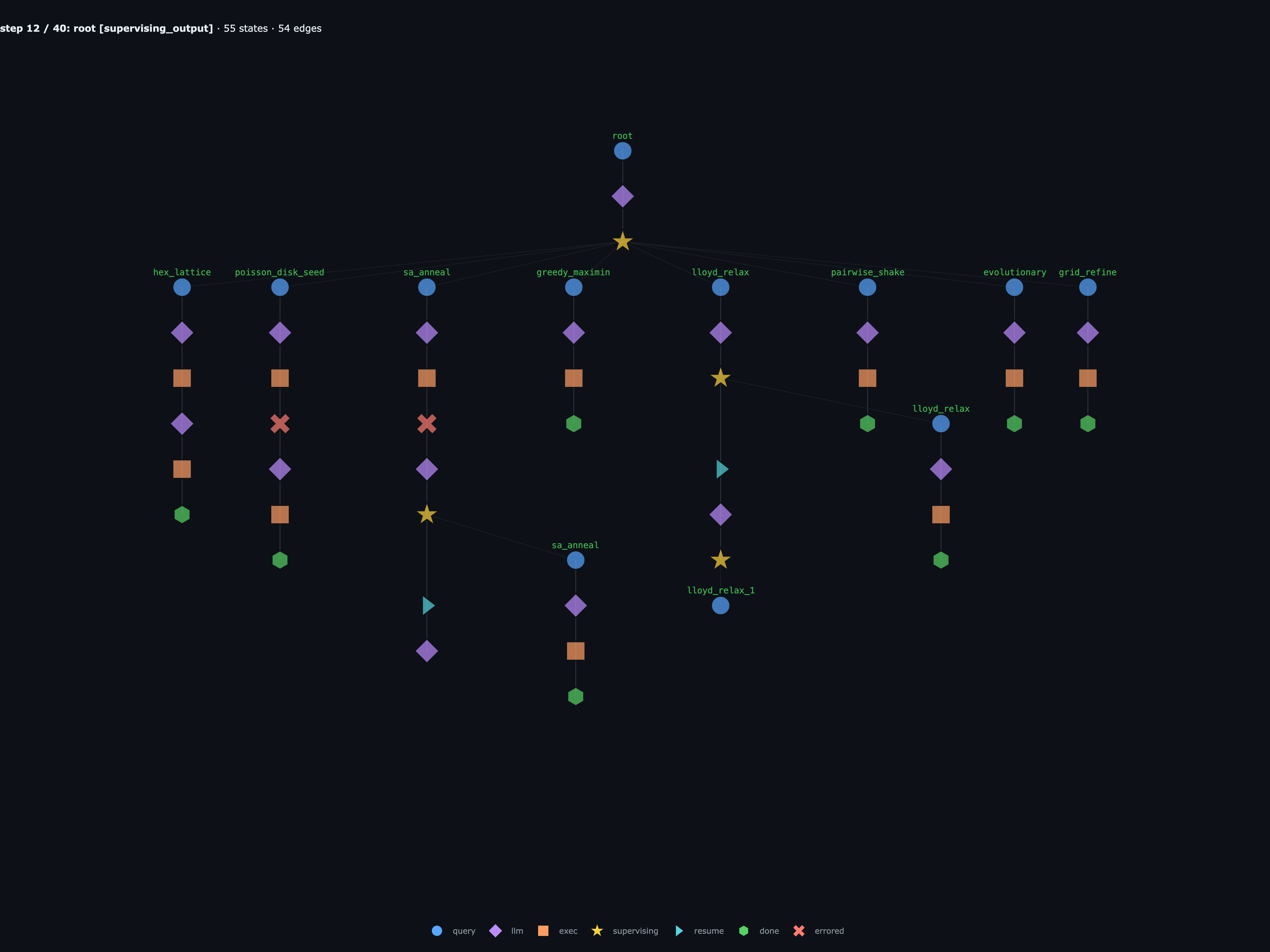

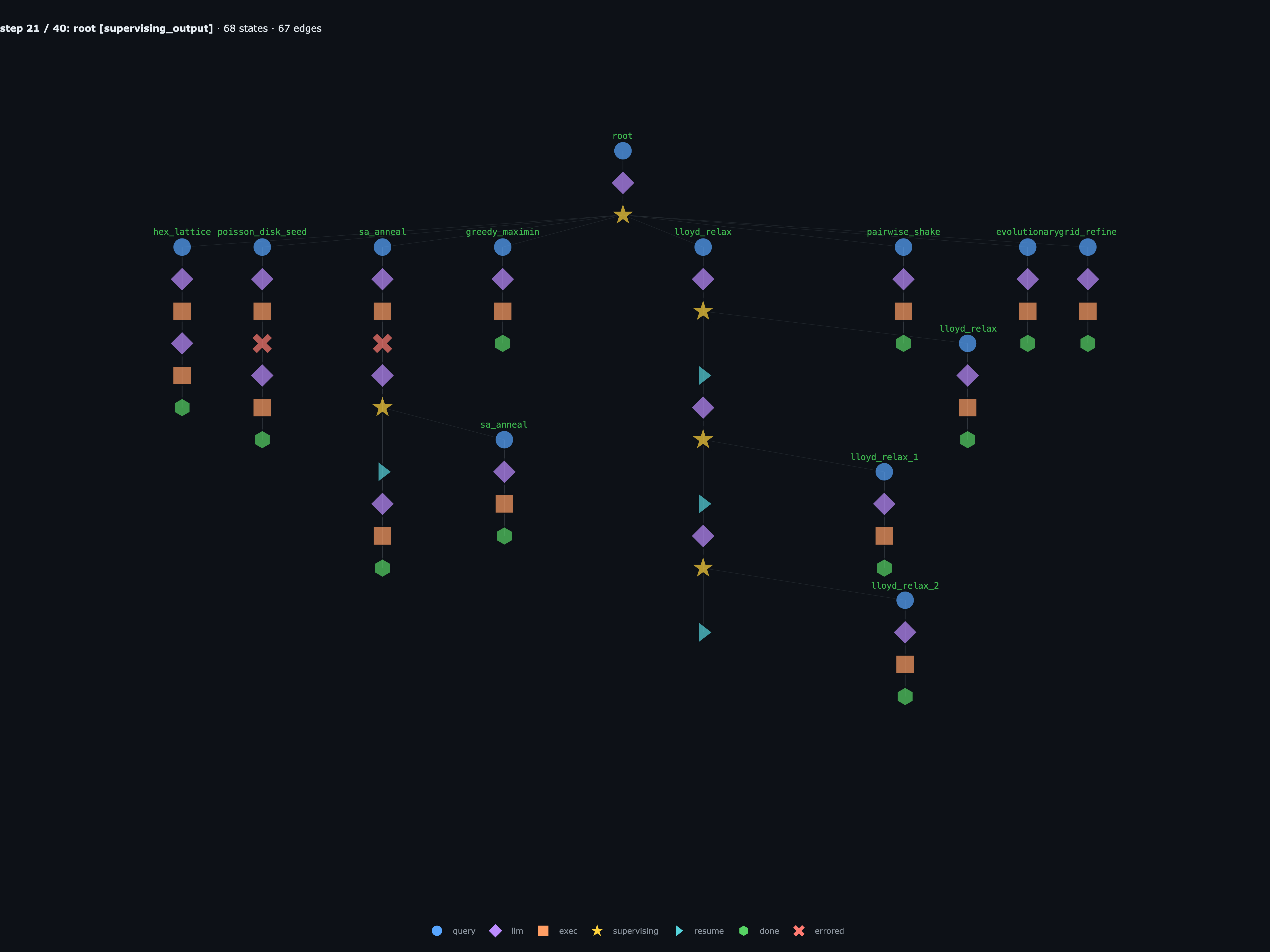

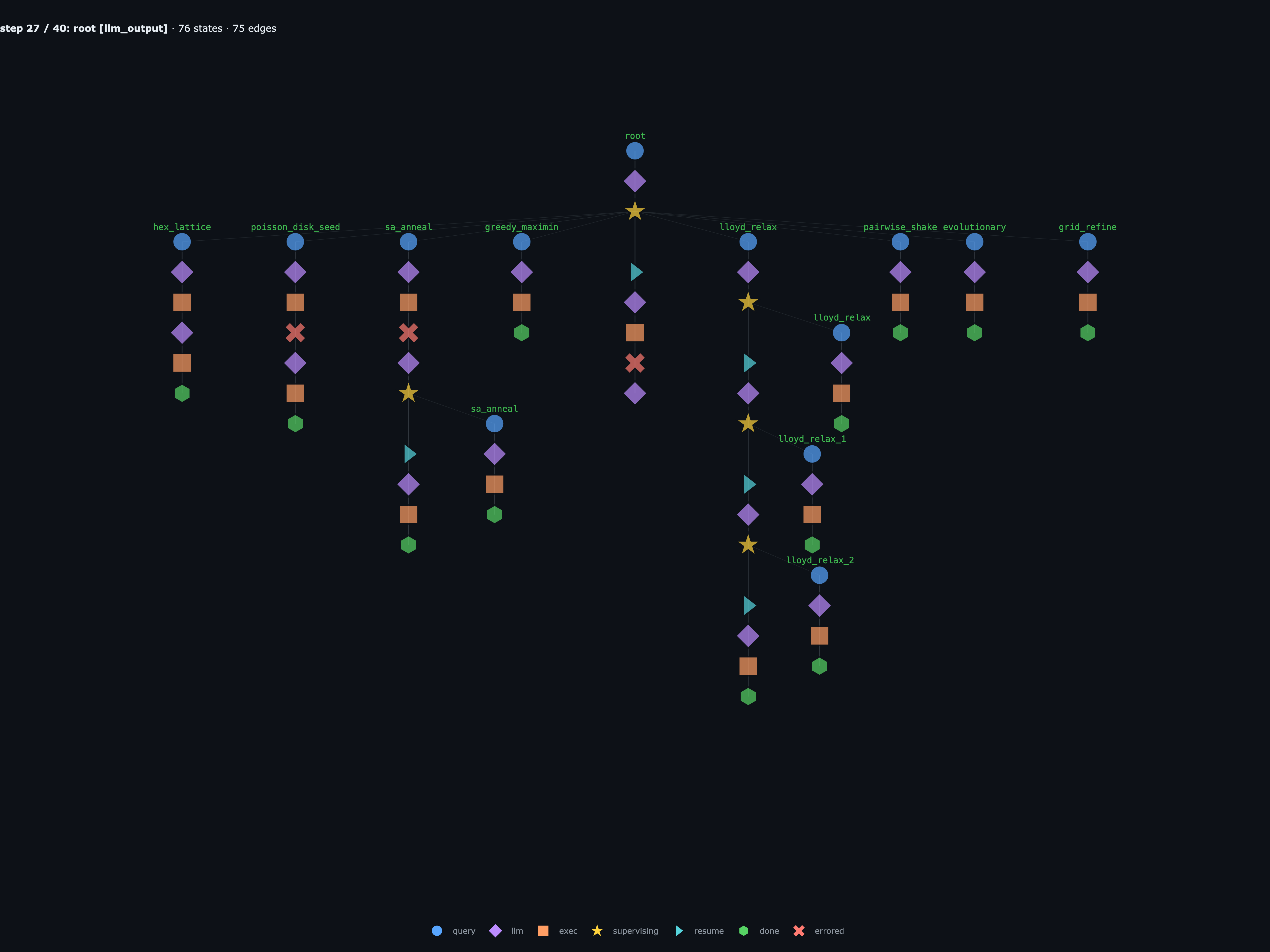

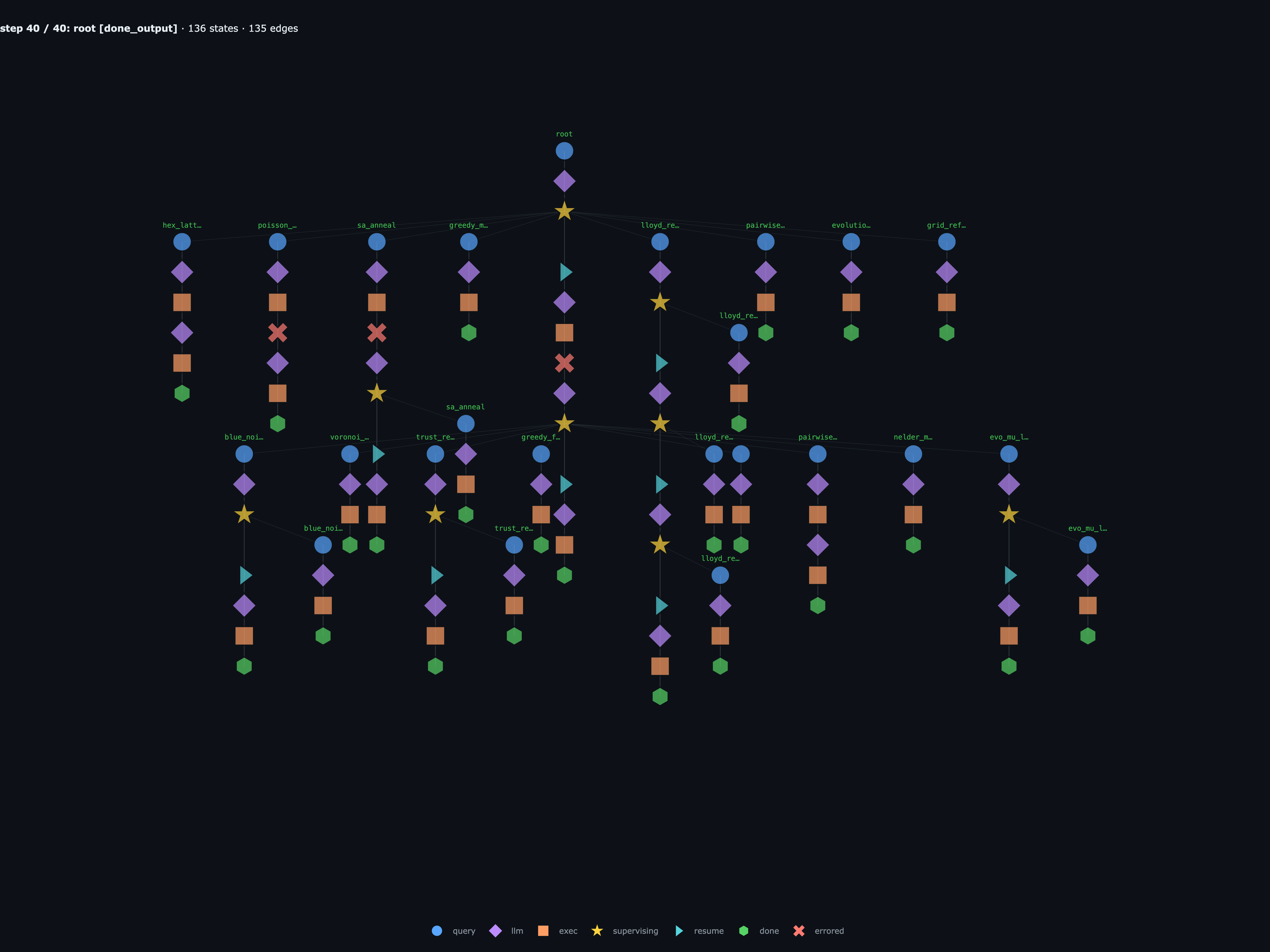

The recursive graph, with the important highlighted steps of the run (slide titles map back to the actual step number in the trace, so e.g. Step 7 / 18 is the seventh step(graph) call, not the seventh slide):

Recursive autoresearcher

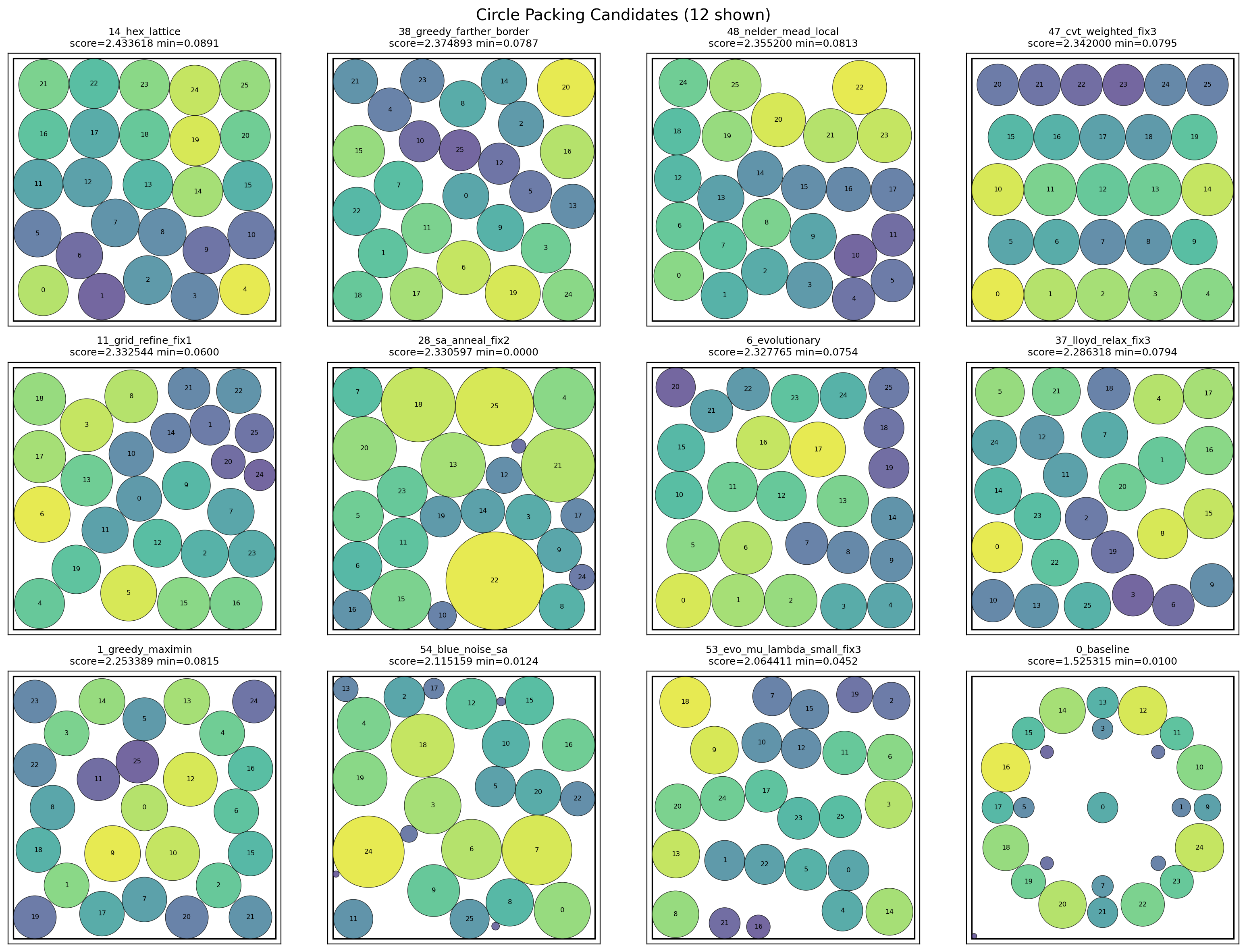

The same recursive structure is useful well beyond simple coding agent loops. Inspired by Karpathy’s autoresearch, this example turns the agent into a researcher that hill-climbs a hand-rolled benchmark. The parent picks hypotheses, delegates one child per hypothesis to write and run a full candidate, and uses each child’s score to decide what to try next.

The target is a classic geometry problem: pack 26 non-overlapping circles in the unit square and maximize the sum of radii. The baseline solve() (two concentric rings + greedy radius scaling) scores about 1.525. The known optimum is around 2.635. Only numpy and the stdlib are allowed, so children have to hand-roll their algorithms.

autoresearch.py is a ~600-line rlmflow example that wires the parent/child loop, the run_experiment / run_baseline / submission_status tools, and the ledger. Pointed at the circle_packing target it runs as:

    python autoresearch.py \
        --target circle_packing \
        --model gpt-5 \
        --branches-per-turn 8 \
        --child-iterations 3 \
        --max-iterations 40 \
        --max-submissions 64 \
        --budget-s 45 \
        --max-depth 2

The wiring is the same rlm_delegate + await rlm_wait pattern, but each child rewrites a single function (solve() in solution.py), passes the new source to run_experiment(source, description=slug), and reads back a numeric score. A separate evaluate.py — which the agent never sees — imports each candidate, validates the geometry, and prints score: <float>.
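To give a sense of what "validates the geometry" involves, here is a small sketch of a packing scorer. It is not the repo's evaluate.py, which the post does not show: `score_packing`, its tolerance, and its error messages are hypothetical. It only encodes the rules stated above (circles inside the unit square, no overlaps, score is the sum of radii), using the stdlib.

```python
import math


def score_packing(circles, n_expected=26, tol=1e-9):
    """Return the sum of radii for a valid packing, else raise ValueError.

    `circles` is a list of (x, y, r) tuples in the unit square.
    """
    if len(circles) != n_expected:
        raise ValueError(f"expected {n_expected} circles, got {len(circles)}")
    for i, (x, y, r) in enumerate(circles):
        if r <= 0:
            raise ValueError(f"circle {i} has non-positive radius")
        # The whole disc must lie inside [0, 1] x [0, 1].
        if x - r < -tol or x + r > 1 + tol or y - r < -tol or y + r > 1 + tol:
            raise ValueError(f"circle {i} leaves the unit square")
    for i in range(len(circles)):
        xi, yi, ri = circles[i]
        for j in range(i + 1, len(circles)):
            xj, yj, rj = circles[j]
            # Tangent circles are allowed; overlapping ones are not.
            if math.hypot(xi - xj, yi - yj) < ri + rj - tol:
                raise ValueError(f"circles {i} and {j} overlap")
    return sum(r for _, _, r in circles)


# Small worked case: four tangent circles of radius 0.25, one per quadrant.
quadrants = [(0.25, 0.25, 0.25), (0.75, 0.25, 0.25), (0.25, 0.75, 0.25), (0.75, 0.75, 0.25)]
score = score_packing(quadrants, n_expected=4)
print(f"score: {score}")
```

Keeping the scorer outside the agent's view matters for the same reason a held-out test set does: a candidate cannot tune itself to the checker.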

This particular run produced 65 ledger entries across two waves of eight children each. The best valid trial was hex_lattice at score 2.4336 (+60% over the 1.525 baseline, ~92% of the known optimum), with seven other algorithm families clustered above 2.32:
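The two percentages follow directly from the three scores quoted above; a quick check, using only those numbers:

```python
baseline = 1.525   # baseline solve() score quoted above
best = 2.4336      # best valid trial (hex_lattice)
optimum = 2.635    # approximate known optimum quoted above

gain_over_baseline = (best - baseline) / baseline
fraction_of_optimum = best / optimum

print(f"gain over baseline: {gain_over_baseline:.1%}")
print(f"fraction of optimum: {fraction_of_optimum:.1%}")
```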

You can browse the full workspace — the driver, the per-slug source files in history/, the ledger, and the per-agent session logs:

autoresearch/

- circle_packing/

- README.md

- REPORT.md

- evaluate.py

- plot_circles.py

- program.md

- solution.py

- top11_families_plus_baseline.png

- runs/

- autoresearch/

- __pycache__/

- plot_circles.cpython-311.pyc

- solution.cpython-311.pyc

- context/

- root/

- context.txt

- context_metadata.json

- root.blue_noise_sa/

- context.txt

- context_metadata.json

- root.blue_noise_sa.blue_noise_sa/

- context.txt

- context_metadata.json

- root.cvt_weighted/

- context.txt

- context_metadata.json

- root.evo_mu_lambda_small/

- context.txt

- context_metadata.json

- root.evo_mu_lambda_small.evo_mu_lambda_small/

- context.txt

- context_metadata.json

- root.evolutionary/

- context.txt

- context_metadata.json

- root.greedy_farther_border/

- context.txt

- context_metadata.json

- root.greedy_maximin/

- context.txt

- context_metadata.json

- root.grid_refine/

- context.txt

- context_metadata.json

- root.hex_lattice/

- context.txt

- context_metadata.json

- root.lloyd_relax/

- context.txt

- context_metadata.json

- root.lloyd_relax.lloyd_relax/

- context.txt

- context_metadata.json

- root.lloyd_relax.lloyd_relax_1/

- context.txt

- context_metadata.json

- root.lloyd_relax.lloyd_relax_2/

- context.txt

- context_metadata.json

- root.nelder_mead_local/

- context.txt

- context_metadata.json

- root.pairwise_proj/

- context.txt

- context_metadata.json

- root.pairwise_shake/

- context.txt

- context_metadata.json

- root.poisson_disk_seed/

- context.txt

- context_metadata.json

- root.sa_anneal/

- context.txt

- context_metadata.json

- root.sa_anneal.sa_anneal/

- context.txt

- context_metadata.json

- root.trust_region_barrier/

- context.txt

- context_metadata.json

- root.trust_region_barrier.trust_region_barrier/

- context.txt

- context_metadata.json

- root.voronoi_inflate/

- context.txt

- context_metadata.json

- root/

- frames/

- step_00.png

- step_01.png

- step_02.png

- step_03.png

- step_04.png

- step_05.png

- step_06.png

- step_07.png

- step_08.png

- step_09.png

- step_10.png

- step_11.png

- step_12.png

- step_13.png

- step_14.png

- step_15.png

- step_16.png

- step_17.png

- step_18.png

- step_19.png

- step_20.png

- step_21.png

- step_22.png

- step_23.png

- step_24.png

- step_25.png

- step_26.png

- step_27.png

- step_28.png

- step_29.png

- step_30.png

- step_31.png

- step_32.png

- step_33.png

- step_34.png

- step_35.png

- step_36.png

- step_37.png

- step_38.png

- step_39.png

- step_40.png

- history/

- __pycache__/

- 11_grid_refine_fix1.cpython-311.pyc

- 14_hex_lattice.cpython-311.pyc

- 15_hex_lattice_fix1.cpython-311.pyc

- 16_hex_lattice_fix2.cpython-311.pyc

- 17_hex_lattice_fix3.cpython-311.pyc

- 18_poisson_disk_seed.cpython-311.pyc

- 1_greedy_maximin.cpython-311.pyc

- 28_sa_anneal_fix2.cpython-311.pyc

- 37_lloyd_relax_fix3.cpython-311.pyc

- 38_greedy_farther_border.cpython-311.pyc

- 42_voronoi_inflate.cpython-311.pyc

- 47_cvt_weighted_fix3.cpython-311.pyc

- 48_nelder_mead_local.cpython-311.pyc

- 49_nelder_mead_local_fix1.cpython-311.pyc

- 53_evo_mu_lambda_small_fix3.cpython-311.pyc

- 54_blue_noise_sa.cpython-311.pyc

- 58_trust_region_barrier.cpython-311.pyc

- 5_pairwise_shake_fix3.cpython-311.pyc

- 62_pairwise_proj.cpython-311.pyc

- 6_evolutionary.cpython-311.pyc

- 10_grid_refine.py

- 11_grid_refine_fix1.py

- 12_grid_refine_fix2.py

- 13_grid_refine_fix3.py

- 14_hex_lattice.py

- 15_hex_lattice_fix1.py

- 16_hex_lattice_fix2.py

- 17_hex_lattice_fix3.py

- 18_poisson_disk_seed.py

- 19_poisson_disk_seed_fix1.py

- 1_greedy_maximin.py

- 20_poisson_disk_seed_fix2.py

- 21_poisson_disk_seed_fix3.py

- 22_lloyd_relax.py

- 23_lloyd_relax_fix1.py

- 24_lloyd_relax_fix2.py

- 25_lloyd_relax_fix3.py

- 26_sa_anneal.py

- 27_sa_anneal_fix1.py

- 28_sa_anneal_fix2.py

- 29_sa_anneal_fix3.py

- 2_pairwise_shake.py

- 30_lloyd_relax.py

- 31_lloyd_relax_fix1.py

- 32_lloyd_relax_fix2.py

- 33_lloyd_relax_fix3.py

- 34_lloyd_relax.py

- 35_lloyd_relax_fix1.py

- 36_lloyd_relax_fix2.py

- 37_lloyd_relax_fix3.py

- 38_greedy_farther_border.py

- 39_greedy_farther_border_fix1.py

- 3_pairwise_shake_fix1.py

- 40_greedy_farther_border_fix2.py

- 41_greedy_farther_border_fix3.py

- 42_voronoi_inflate.py

- 43_voronoi_inflate_fix1.py

- 44_cvt_weighted.py

- 45_cvt_weighted_fix1.py

- 46_cvt_weighted_fix2.py

- 47_cvt_weighted_fix3.py

- 48_nelder_mead_local.py

- 49_nelder_mead_local_fix1.py

- 4_pairwise_shake_fix2.py

- 50_evo_mu_lambda_small.py

- 51_evo_mu_lambda_small_fix1.py

- 52_evo_mu_lambda_small_fix2.py

- 53_evo_mu_lambda_small_fix3.py

- 54_blue_noise_sa.py

- 55_blue_noise_sa_fix1.py

- 56_blue_noise_sa_fix2.py

- 57_blue_noise_sa_fix3.py

- 58_trust_region_barrier.py

- 59_trust_region_barrier_fix1.py

- 5_pairwise_shake_fix3.py

- 60_trust_region_barrier_fix2.py

- 61_trust_region_barrier_fix3.py

- 62_pairwise_proj.py

- 63_pairwise_proj_fix1.py

- 64_pairwise_proj_fix2.py

- 6_evolutionary.py

- 7_evolutionary_fix1.py

- 8_evolutionary_fix2.py

- 9_evolutionary_fix3.py

- ledger.jsonl

- __pycache__/

- session/

- root/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.blue_noise_sa/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.blue_noise_sa.blue_noise_sa/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.cvt_weighted/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.evo_mu_lambda_small/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.evo_mu_lambda_small.evo_mu_lambda_small/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.evolutionary/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.greedy_farther_border/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.greedy_maximin/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.grid_refine/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.hex_lattice/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.lloyd_relax/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.lloyd_relax.lloyd_relax/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.lloyd_relax.lloyd_relax_1/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.lloyd_relax.lloyd_relax_2/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.nelder_mead_local/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.pairwise_proj/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.pairwise_shake/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.poisson_disk_seed/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.sa_anneal/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.sa_anneal.sa_anneal/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.trust_region_barrier/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.trust_region_barrier.trust_region_barrier/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root.voronoi_inflate/

- agent.json

- latest.json

- session.jsonl

- transcript.json

- root/

- README.md

- REPORT.md

- evaluate.py

- graph.json

- plot_circles.py

- program.md

- solution.py

- top11_plus_baseline.png

- viewer.html

- __pycache__/

- autoresearch/

- .DS_Store

- README.md

- autoresearch.py

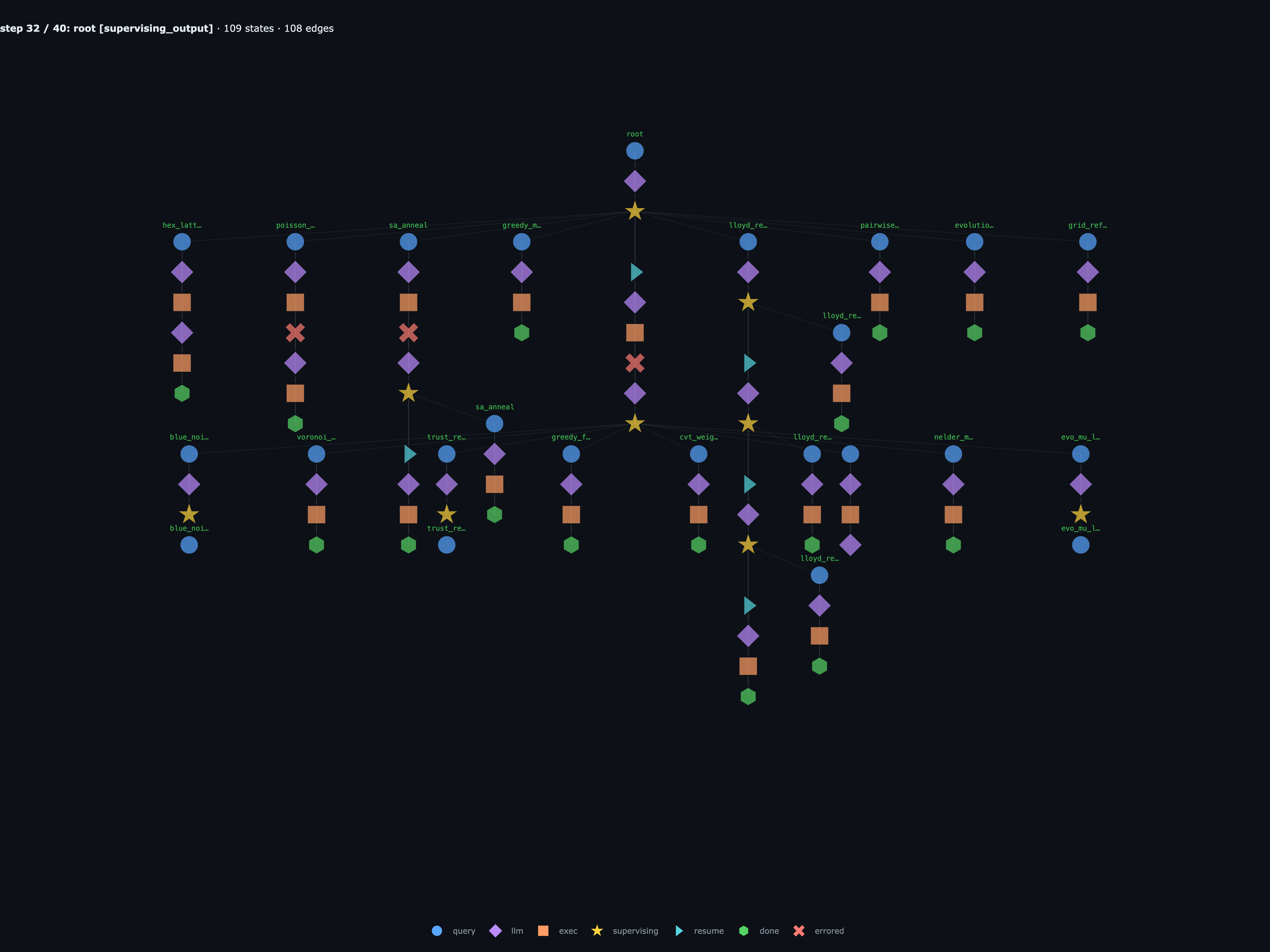

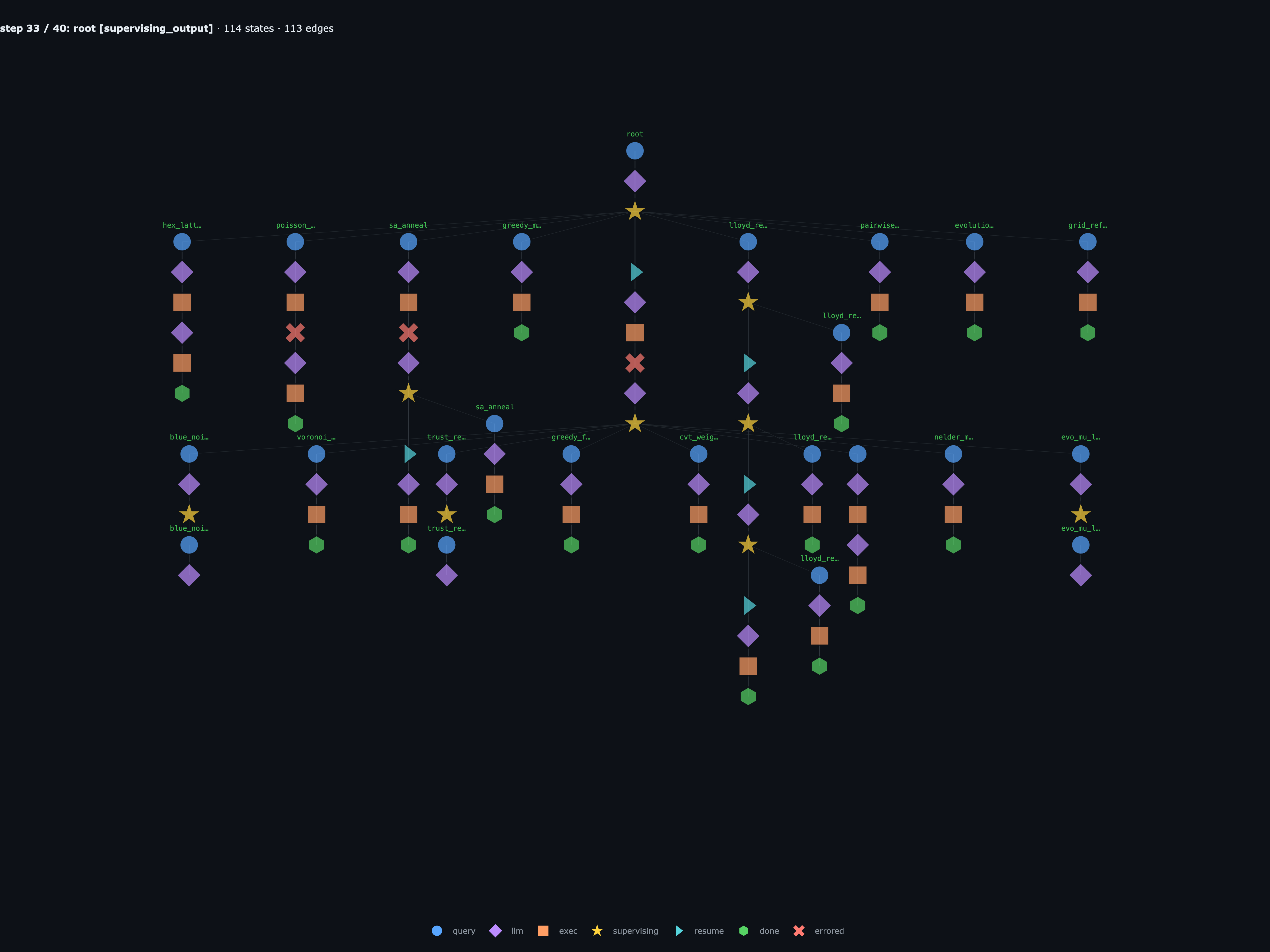

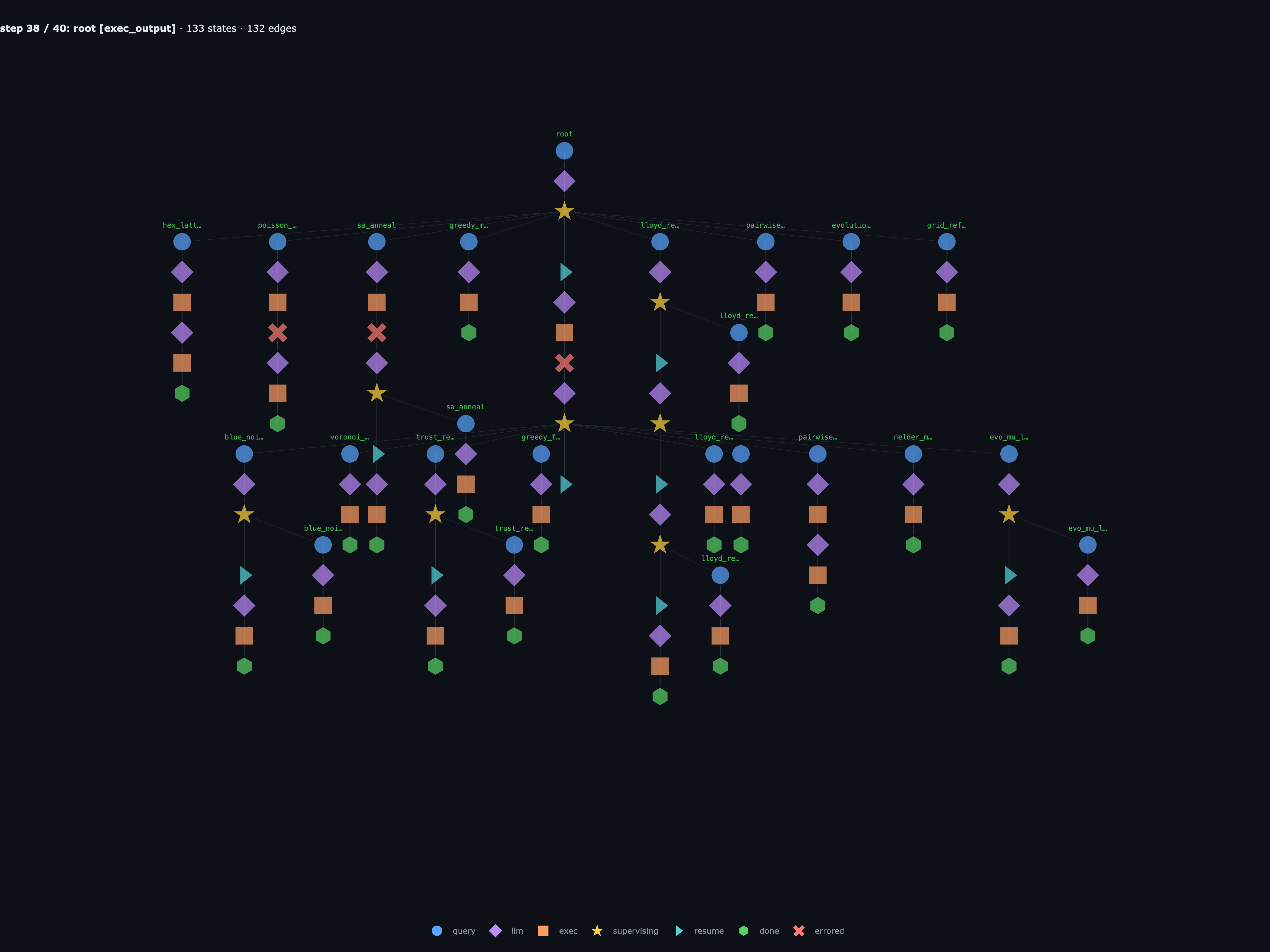

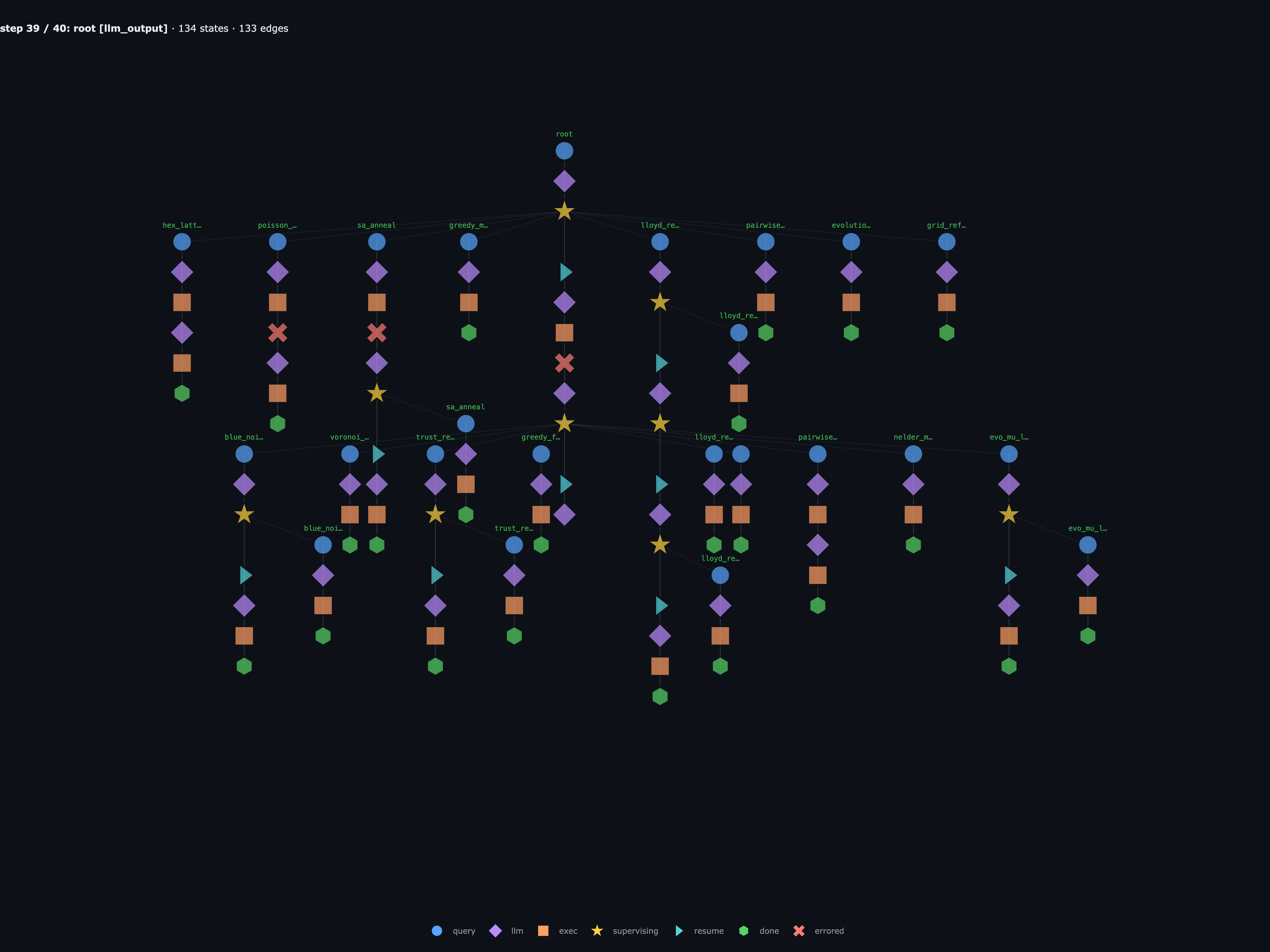

And the execution graph itself, with the important highlighted steps of the run, where each slide is labeled by its real step in the full 41-step trace (so e.g. Step 27 / 40 is the actual root-level exec_exception and not the 10th slide of a curated deck):

The graph captures the entire research session: every hypothesis the parent tried, every candidate source string each child wrote, every grandchild a child spawned to explore a sub-variant, and every error along the way (two child crashes that the engine fed back as _fix1, plus one root-level exec_exception while staging wave 2). This is where the graph matters in practice: the failed source, traceback, parent recovery step, and final score all live in the same run object. The ledger and the graph together make the whole run reproducible from any node.

Extra features

Graph manipulation

With rlmflow's graph structure, you can:

Inspect one agent without rereading every sibling’s messages:

    from rlmflow.utils.viz import message_stream

    print(message_stream("root.chunk_2", graph))

Replay from a saved workspace instead of starting the whole run over. The workspace directory is the durable run, so you reopen it and keep stepping:

    workspace = Workspace.open_path("./myproject")
    graph = workspace.load_graph()
    while not graph.finished:
        graph = agent.step(graph)

Fork into an isolated workspace and try a different model or prompt:

    alt_ws = workspace.fork(new_branch_id="alt", new_dir="./runs/alt")
    alt_agent = RLMFlow(llm_client=OpenAIClient("gpt-5-mini"), workspace=alt_ws, ...)
    alt_graph = alt_ws.load_graph()
    while not alt_graph.finished:
        alt_graph = alt_agent.step(alt_graph)

Edit a branch by patching a bad state before continuing:

    bad = graph.nodes.where(type="done_output", agent_id="root.chunk_2")[0]
    graph.nodes.update(bad.id, result="84721", content="84721")
    graph = agent.step(graph)  # parent resumes from the patched graph

DSPy integration

There is also a lightweight DSPy wrapper, mostly so existing DSPy programs can use an rlmflow agent as their language model while keeping the same graph-backed trace:

    from pathlib import Path

    import dspy

    from rlmflow import OpenAIClient, RLMConfig, RLMFlow, Workspace
    from rlmflow.integrations.dspy import RLMFlowLM
    from rlmflow.runtime.local import LocalRuntime

    workspace = Workspace.create(Path(__file__).parent / "example-workspaces" / "dspy-workspace")

    agent = RLMFlow(
        llm_client=OpenAIClient(model="gpt-4o-mini"),
        runtime=LocalRuntime(workspace=workspace),
        config=RLMConfig(max_depth=1, max_iterations=5),
    )

    # Route DSPy's LM calls through the rlmflow agent
    dspy.configure(lm=RLMFlowLM(agent, model="rlmflow/gpt-4o-mini"))

    qa = dspy.ChainOfThought("question -> answer")
    result = qa(question="What is 17 * 23? Show a short calculation.")
    print(result.answer)

Check out the rest of the features in the repo.

Conclusion

RLMs handle long contexts by spawning sub-agents recursively. Even though this is an elegant and effective approach, it can be a black box: sub-agent calls become opaque and hard to observe or control. rlmflow solves this by representing the recursive structure as a graph, where you can step through parallel work, replay from checkpoints, and fork or edit nodes.

As LLMs get better at coding, strict agent harnesses become less important. RLMs let the model decide how to view and manipulate context, when to delegate pieces of it to sub-agents, and how to combine the results, all through the same clean coding interface. rlmflow is for people building long-context agents, recursive coding agents, and research loops where the trace should not just be inspectable, but something you can replay, fork, edit, and continue from.

Try it: https://github.com/shyamsn97/rlmflow.

Acknowledgements

Thanks to Alex Zhang and Omar Khattab for the original RLM work. I really think it’s going to be one of the most important ideas for building LLM agents.

Thanks also to the ypi project for its super clean interface and prompts.

Citation

    @misc{sudhakaran2026rlmflow,
      author = {Sudhakaran, Shyam},
      title = {Recursive Language Models are Graphs},
      year = {2026},
      howpublished = {\url{https://shyamsn97.github.io/blog/rlmflow/}}
    }

References

Zhang, A. and Khattab, O. Recursive Language Models. Blog post, 2025. URL: https://alexzhang13.github.io/blog/2025/rlm/. Paper: https://arxiv.org/abs/2512.24601. Code: https://github.com/alexzhang13/rlm-minimal.

Prime Intellect. Recursive Language Models in verifiers. Blog post, 2025. URL: https://www.primeintellect.ai/blog/rlm.

Anthropic. Context Rot. 2025. URL: https://www.anthropic.com/news/context-rot.

Chroma Research. Context Rot: How Increasing Input Tokens Impacts LLM Performance. 2025. URL: https://research.trychroma.com/context-rot.

Hsieh, C.-P., Sun, S., Kriman, S., Acharya, S., Rekesh, D., Jia, F., Zhang, Y., and Ginsburg, B. RULER: What’s the Real Context Size of Your Long-Context Language Models? 2024. URL: https://arxiv.org/abs/2404.06654.

OOLONG: Evaluating LLMs on Long-Context Tasks. 2025. URL: https://github.com/oolong-bench/oolong.

Liu, N. F., Lin, K., Hewitt, J., Paranjape, A., Bevilacqua, M., Petroni, F., and Liang, P. Lost in the Middle: How Language Models Use Long Contexts. 2023. URL: https://arxiv.org/abs/2307.03172.

Sun, W., Lu, M., Ling, Z., Liu, K., Yao, X., Yang, Y., and Chen, J. Scaling Long-Horizon LLM Agent via Context-Folding. 2025. URL: https://arxiv.org/abs/2510.11967.

Sutton, R. S. The Bitter Lesson. 2019. URL: http://www.incompleteideas.net/IncIdeas/BitterLesson.html.

Reynolds, C. W. Flocks, Herds, and Schools: A Distributed Behavioral Model. SIGGRAPH ’87, 1987. URL: https://www.red3d.com/cwr/boids/.

ypi: a recursive coding agent. 2025. URL: https://github.com/rawwerks/ypi.